If you’re like me, there’s a perfect hour that happens right after the kids go to sleep. Your spouse settles down to read a book, and you have the remote control to the television all to yourself. With no plan for what to watch, how do you find something that you’re interested in seeing?

Welcome to the tradition of channel surfing, where millions of people spend the twilight hours of their daily lives perusing 300 channels of television programming, one channel at a time. Channel surfing leaves little time to consider the value of what’s on any particular channel.

We evaluate a program based on a split second of content to see if it merits further investigation. And all too often, we tire of searching for quality late-night programming and fall asleep in the TV’s dim glow, only to wake several hours later at the start of a new workday.

For us security practitioners, there is a similarity between channel surfing and the banal process of surfing log files. With multitudes of security systems generating thousands of log entries (or more) at points across the network, how can you find the stuff that’s interesting and worth investigating?

A well-trained eye can certainly spot unusual activity, but we’re long past the point where anyone can do it by reading log files. There’s simply too much data to correlate, spread across too many systems, with the clues scattered among anomalous entries in multiple log files.

In a recent article, CSO Magazine pointed out that the scope of the problem is vast. Sixty percent of the respondents in a survey conducted by Enterprise Management Associates said they were gathering more than 50 GB of log data and over 166 million events per day. Even more interesting, respondents wanted more: given the option to gather and store more log data, they would take it.

What’s needed is a way to bring all of this data together into something actionable. Instead of looking at the logs themselves, organizations need tools to interpret and make sense of the security events happening across their network. The logs are where you go when you need the details, but not necessarily where you should spend all of your time.

The Palo Alto Networks next-generation firewall takes a very different approach to security. It starts by determining which applications should be allowed in the enterprise, and from there correlates who can use them and what content may pass. As a result, it provides visibility into security events from a single location, tying together issues that were traditionally scattered across different systems on the network.

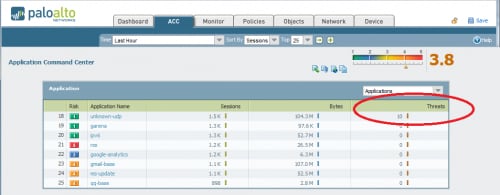

Let’s take a look at the Application Command Center (ACC), which provides a high-level view of activity passing through the firewall. The ACC summarizes log information to make it digestible and actionable.

The first thing that catches the eye is that the ACC shows sessions, bytes and threats on the same screen. For organizations that rely on a separate IPS for vulnerability protection, this information would be neither present in nor correlated with the firewall logs. Line #18, “unknown-udp”, stands out because it indicates traffic that was not identified as any well-known application. While it may be benign, it definitely merits further investigation, especially because threats have been identified within this traffic.
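To make the idea of rolling raw logs up into a per-application view concrete, here is a minimal Python sketch. It is not how the ACC is implemented: the `LogRecord` format and the `summarize_by_app` and `needs_investigation` helpers are hypothetical, and it assumes each record stands for one session, tagged with the threat (if any) detected in it.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional


@dataclass
class LogRecord:
    # Hypothetical, simplified record: one session per record.
    app: str
    src_ip: str
    nbytes: int
    threat: Optional[str] = None  # threat name if one was detected in this session


@dataclass
class AppSummary:
    sessions: int = 0
    nbytes: int = 0
    threats: int = 0


def summarize_by_app(records: Iterable[LogRecord]) -> Dict[str, AppSummary]:
    """Roll individual records up into per-application session, byte and threat totals."""
    summary: Dict[str, AppSummary] = defaultdict(AppSummary)
    for rec in records:
        s = summary[rec.app]
        s.sessions += 1
        s.nbytes += rec.nbytes
        if rec.threat is not None:
            s.threats += 1
    return dict(summary)


def needs_investigation(summary: Dict[str, AppSummary]) -> List[str]:
    """Flag unidentified traffic (app names starting with 'unknown-') that also carries threats."""
    return sorted(
        app for app, s in summary.items()
        if app.startswith("unknown-") and s.threats > 0
    )


if __name__ == "__main__":
    records = [
        LogRecord("web-browsing", "10.0.0.5", 1200),
        LogRecord("unknown-udp", "10.0.0.7", 300, "Bot: Mariposa Command and Control"),
        LogRecord("unknown-udp", "10.0.0.9", 250),
    ]
    print(needs_investigation(summarize_by_app(records)))  # ['unknown-udp']
```

Keeping sessions, bytes and threats in the same summary object is what lets a single view answer “which unidentified traffic is also carrying threats?” without a second system.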

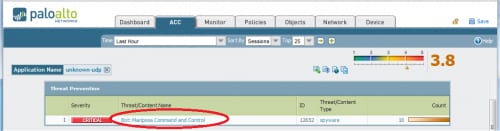

By clicking the “unknown-udp” link, I can drill down further. At this point, I could narrow down where this unknown traffic is coming from, but first I want to know more about the threats detected.

It turns out that there is Mariposa botnet activity on the network, using UDP to communicate with a command-and-control (C&C) server. Mariposa’s C&C servers were taken down long ago, but it looks like there are still infected endpoints on this network. I didn’t have to flip between two different systems, as I would with a traditional firewall/IPS combination; it’s all here on one screen.

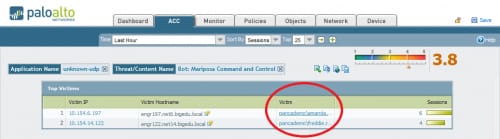

When I click the link for the Mariposa traffic, the Application Command Center filters on both “unknown-udp” and “Bot: Mariposa Command and Control”.

Now I have the names of the people with the infected machines, using data from Active Directory (it works with other LDAP repositories as well). Again, there’s no need to log into a separate system to find out which user is at a particular IP address.
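The two-facet filter and the IP-to-user lookup described above can be sketched in Python as follows. This is an illustration only: the `ip_to_user` dictionary is a hypothetical stand-in for a directory lookup against Active Directory or another LDAP repository, and `LogRecord`, `filter_records` and `infected_users` are made-up names, not part of any Palo Alto Networks API.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional


@dataclass
class LogRecord:
    # Hypothetical, simplified record with only the fields this example needs.
    app: str
    src_ip: str
    threat: Optional[str] = None


def filter_records(records: Iterable[LogRecord], app: str, threat: str) -> List[LogRecord]:
    """Keep only records matching BOTH facets, like stacking two filters in the UI."""
    return [r for r in records if r.app == app and r.threat == threat]


def infected_users(records: Iterable[LogRecord], ip_to_user: Dict[str, str]) -> List[str]:
    """Resolve source IPs to user names; IPs with no mapping are reported as-is."""
    users = {ip_to_user.get(r.src_ip, f"unknown user at {r.src_ip}") for r in records}
    return sorted(users)


if __name__ == "__main__":
    records = [
        LogRecord("unknown-udp", "10.0.0.7", "Bot: Mariposa Command and Control"),
        LogRecord("unknown-udp", "10.0.0.12", "Bot: Mariposa Command and Control"),
        LogRecord("unknown-udp", "10.0.0.9"),  # no threat, filtered out
        LogRecord("web-browsing", "10.0.0.5"),  # different app, filtered out
    ]
    # Hypothetical IP-to-user mapping standing in for a directory query.
    ip_to_user = {"10.0.0.7": "jsmith", "10.0.0.9": "adoe"}
    hits = filter_records(records, "unknown-udp", "Bot: Mariposa Command and Control")
    print(infected_users(hits, ip_to_user))  # ['jsmith', 'unknown user at 10.0.0.12']
```

Reporting unmapped IPs instead of dropping them keeps infected hosts visible even when the directory has no entry for them.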

Getting back to log files, we’re actually just one click away. I can click a button on the control panel that pulls the records specific to the current investigation, without having to write a complicated SQL query. The button builds the log query I need and retrieves the matching entries, at which point I can look at individual log entries or packet captures.

That’s just one example of how we went from a high-level overview, to spotting something that required investigation, to locating the specific users involved. It didn’t take a lot of time or effort, because the tools surfaced the issues for us and we didn’t have to correlate data across different systems to resolve the problem.

If you are interested in seeing how third-party tools can work together with the Palo Alto Networks next-generation firewall, we have a number of partnerships with leading vendors. For further information about how to use Palo Alto Networks with tools available from our partners, take a look at the following solution briefs:

- Splunk App for Palo Alto Networks

- ArcSight Enterprise Threat & Risk Management Integration

- LogRhythm Log Management & SIEM Integration

- NitroSecurity NitroView Integration

- Q1 Labs QRadar Integration

- Symantec Security Information Manager Integration

- RSA enVision Integration

With the Palo Alto Networks next-generation firewall, you can take steps to get your log surfing habits under control. With less time spent looking ‘line by line’ at system logs, you can perhaps dedicate more time to your TV habits.