Sikorski's Strategy with AI

“AI’s Impact in Cybersecurity” is a blog series based on interviews with a variety of experts at Palo Alto Networks and Unit 42, with roles in AI research, product management, consulting, engineering and more. Our objective is to present different viewpoints and predictions on how artificial intelligence is impacting the current threat landscape, how Palo Alto Networks protects itself and its customers, as well as implications for the future of cybersecurity.

We recently chatted with Michael Sikorski, the CTO of Unit 42, who leads Threat Intelligence and Engineering for the division.

As the hype around AI continues to ramp up, cybersecurity practitioners are trying to separate reality from fiction when it comes to how artificial intelligence will impact their field. Our discussion includes some candid predictions on AI's near- and long-term implications for cyberattacks and defense.

The Phishing Threat Becomes Much Stronger

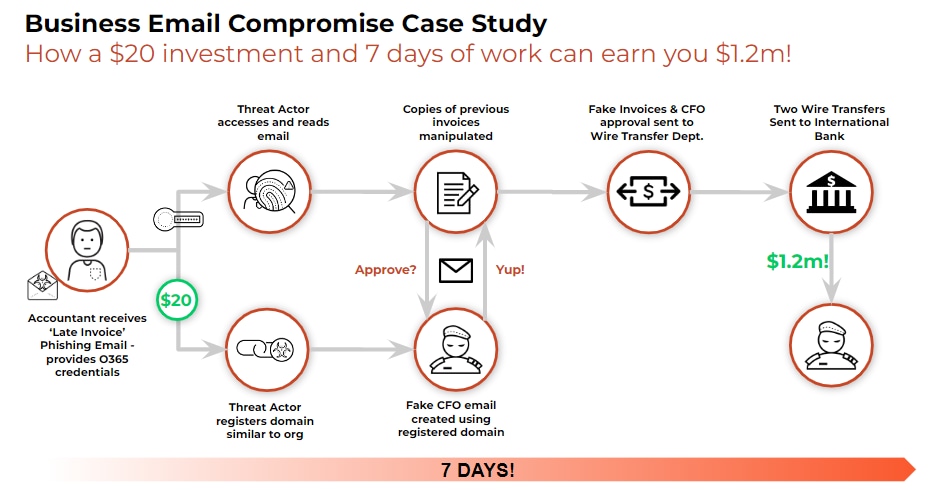

In the near term (the next 6-12 months), Sikorski believes the top offensive use of AI will be supercharging social engineering attacks, like phishing and business email compromise (BEC). As he bluntly states, "I think this will be short-lived and phishing will take the number one spot again due to AI."

AI language models can study a target's entire email history and communication patterns, then craft highly convincing phishing messages. Sikorski explains:

"They can build trust very quickly. You see emails going back and forth where they're talking about their dog or their family and the LLM could even replicate that, build the trust and then say, 'Oh yeah. Can you fill out that invoice? Just like you did 6 months ago,' and they're more likely to engage, right? Because they're more easily fooled."

Sikorski believes this scalable, automated phishing threat is already starting to happen and will only grow more prevalent in the short term as attackers make it "their standard, go-to" approach.

Adversaries Look to Generate Malware and Poison AI Training Data and Systems

As we look 12 months to a few years out, Sikorski expects malicious actors to evolve their AI offensive capabilities in two key areas:

1) Crafting malware using AI language models trained on existing malware code to stitch together new strains that can bypass detection. "We're trying to actually create malware using LLMs and then feeding it and throwing it at our products to see how well they do," he notes about the proactive defense work of Palo Alto Networks.

2) Attacking the AI/ML systems themselves through techniques like prompt injection and poisoning training datasets to manipulate the outputs. "I think we'll even see attacks going after training data poisoning. So I think the midterm is going to be more going after the LLMs because it's a new technology."

AI Fuels Automated, Scalable Attack Campaigns

Sikorski's biggest concern looks 5-plus years into the future, when AI could enable massively scalable, autonomous attack campaigns against thousands or millions of targets simultaneously, far beyond the human-operated campaigns we've seen so far. He warns:

"Imagine with AI, they have the ability to make decisions about who to target, how to move laterally, and grab the valuable data … in an automated fashion. I think that's where this is going. Where the idea of having a fully automated red team, and then therefore also a fully automated attacker."

Sikorski compares it to the SolarWinds supply chain attack, noting "... even with their military, they still had limited resources and operators" and could only operationalize a subset of backdoors despite the widespread prevalence of the corrupted software.

"Now imagine with AI, they have the ability to … do all that in an automated fashion … you can imagine, instead of just attacking hundreds of networks, they attack thousands and install their hooks for the future."

The Bright Side: AI-Enabled Defense at Scale

However, Sikorski also sees reason for optimism in how AI can boost defensive cybersecurity capabilities. He thinks AI/ML advancements may even drive down the total number of exploitable vulnerabilities in software through better automated testing and remediation at scale during development. Sikorski reasons:

"I think on the exploit and zero day generation, I think there's some argument to be had that software developers are also going to leverage this technology and try and fix the vulnerabilities before bad guys find them."

This is an arms race, and defenders need to go all in to win it.

For security operations, Sikorski believes AI/ML will help teams devote more bandwidth to proactive defense efforts, like threat hunting, rather than reactive triage:

"If we could automate away all of this stuff that SOCs know about … then we can redirect our SOC into doing things like hunting. And that takes a lot more creativity to go and find new attacks that we don't know about."

The key for security leaders, according to Sikorski, is to closely track KPIs that show how much AI helps "drive down your costs" and that answer the question, "what percentage of the time are they finding new things?" He advises:

"If that's going up over time, then that means your team is not just spending on triaging known attacks, triaging false positives all day long."

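As a rough illustration of the KPI Sikorski describes, the sketch below computes the percentage of analyst time spent finding new things and checks whether that share rises from one period to the next. Everything in it is an assumption made for illustration: the `PeriodLog` record, the split of hours into triage and hunting, and the function names are hypothetical and do not come from Sikorski or from any Palo Alto Networks product. A real SOC would define "finding new things" against its own ticketing data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PeriodLog:
    """Hypothetical record of SOC analyst hours for one reporting period."""
    label: str
    triage_hours: float   # time on known attacks and false positives
    hunting_hours: float  # time on hunting that surfaces new findings


def hunting_share(log: PeriodLog) -> float:
    """Return the percentage of logged hours spent on hunting."""
    total = log.triage_hours + log.hunting_hours
    if total == 0:
        return 0.0  # no hours logged, so report no hunting share
    return 100.0 * log.hunting_hours / total


def is_trending_up(logs: List[PeriodLog]) -> bool:
    """True if the hunting share strictly increases period over period."""
    shares = [hunting_share(log) for log in logs]
    return all(later > earlier for earlier, later in zip(shares, shares[1:]))


# Illustrative numbers only, not real measurements.
quarters = [
    PeriodLog("Q1", triage_hours=30, hunting_hours=10),
    PeriodLog("Q2", triage_hours=24, hunting_hours=16),
    PeriodLog("Q3", triage_hours=20, hunting_hours=20),
]

for q in quarters:
    print(f"{q.label}: {hunting_share(q):.1f}% of time on new findings")
print("Trending up:", is_trending_up(quarters))
```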
As AI cyber offense and defense capabilities accelerate in parallel, the pragmatic voices of real-world practitioners, like Michael Sikorski, will be critical for cybersecurity teams to operationalize AI's potential benefits while staying clear-eyed about the emerging threats.