The CISO's Dilemma: When Trust is the Attack Vector

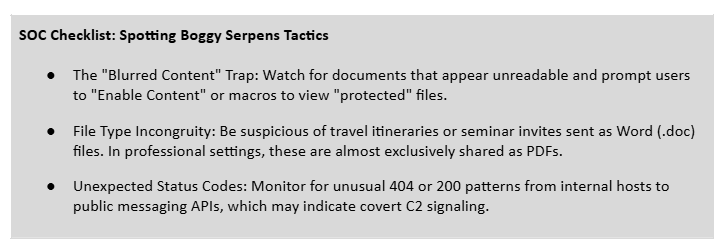

The email that started this investigation didn't look suspicious. It came from a real account, from a real government ministry, and it landed in multiple inboxes across the Middle East exactly the way a legitimate diplomatic communication would. No spoofed domain. No known-bad IP. Nothing a signature was going to catch.

That's the point.

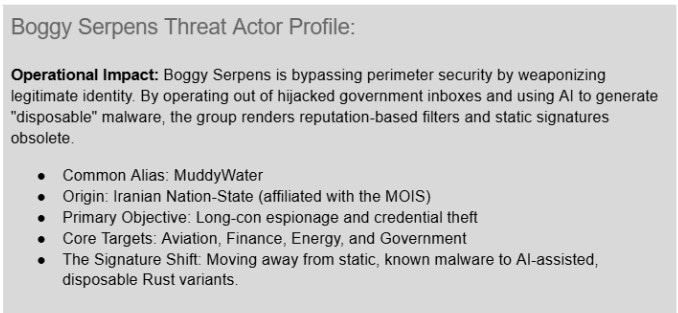

Boggy Serpens, known globally as MuddyWater, is an Iranian nation-state cyberespionage group that Unit 42 and Cortex threat researchers have tracked since 2017.

In the fourth episode of "Threat Vector Investigates," Peter Havens and Nathaniel Quist, Threat Research Manager for Cortex, break down the group's latest campaign: a sophisticated evolution in which the group didn't hack in. It logged in. And buried inside the malware the team pulled apart, they found something you don't usually see in nation-state code: fingerprints of AI-assisted development.

This group isn't just getting more sophisticated. They're iterating faster than traditional detection can keep pace with.

The "Trusted Friend" Trap

Forget broad phishing blasts. Boggy Serpens performs precision espionage.

The core of this campaign is what the research team calls the Identity Crisis. Rather than spoofing government email addresses, the group compromises actual government inboxes and operates from inside them. Your "safe sender" list, the one IT admins tell employees to trust, becomes the vulnerability.

The research team tracked a single energy company targeted in four distinct waves over six months. Engineers received pipeline integrity reports. HR received resumes. One individual got a fake flight itinerary, built with a real name and real travel window, indistinguishable from a legitimate corporate booking. Each lure delivered a completely different malware payload.

Once a target opens the document, they typically see a blurred, "protected" file asking them to enable content to view it. The moment they click, a Visual Basic for Applications (VBA) macro executes and fetches the second-stage payload, while an unblurred (and legitimate-looking) document appears on screen. By then, the malware has already beaconed back to a Telegram bot or C2 server. This isn't automated spam. It's manual, focused espionage.

The Rust Revolution and the AI Smoking Gun

Historically, groups like Boggy Serpens leaned on .NET and PowerShell. The latest campaign is different.

The group has shifted to Rust, a language that malware authors increasingly favor. It's memory-safe and fast, and its compiled binaries are significantly harder to reverse-engineer than .NET or PowerShell tooling. The research team identified new malware families written in Rust, including LampoRAT and BlackBeard: custom Remote Access Trojans (RATs) capable of stealing credentials, capturing screenshots, running commands, and establishing full access on a compromised host.

But there was a more unexpected finding inside the code.

In the logs of LampoRAT, the team found checkmark emojis (✅) and X marks (❌) used as status indicators. Human malware authors write terse functional logs: [+] Success, [-] Error. Large language models, when prompted to make a script "user-friendly," produce exactly this kind of output.
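For analysts who want to triage for this tell, a minimal sketch follows. It assumes you already have strings extracted from a sample (for example, from a strings dump); the function name, marker lists, and sample strings are illustrative and do not come from the research. Treat a hit as a weak hint worth a closer look, not as proof of AI authorship.

```python
# Hypothetical triage helper: the names and marker sets are assumptions.
EMOJI_MARKERS = ("\u2705", "\u274c")              # the check mark and X mark emojis
TERSE_MARKERS = ("[+]", "[-]", "[!]", "[*]")      # classic terse log prefixes

def classify_log_style(lines):
    """Count extracted strings by the style of status marker they use."""
    counts = {"emoji": 0, "terse": 0}
    for line in lines:
        if any(marker in line for marker in EMOJI_MARKERS):
            counts["emoji"] += 1
        elif line.lstrip().startswith(TERSE_MARKERS):
            counts["terse"] += 1
    return counts

# Made-up strings for illustration only, not taken from LampoRAT.
sample = [
    "\u2705 Connection established",
    "\u274c Failed to read config",
    "[+] Success",
    "unrelated string",
]
print(classify_log_style(sample))  # {'emoji': 2, 'terse': 1}
```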

The implication: Boggy Serpens is using generative AI to iterate on their malware faster than analysts can reverse-engineer it. Because the code is regenerated fresh each wave, it looks different every time, bypassing signature-based detection almost by design.

The Real Risk: We’re Entering the Era of the Disposable Hash

The discovery of AI-generated code snippets in this campaign is more than just a technical curiosity. It signals a shift toward a high-velocity attack model where malware essentially becomes disposable.

In a traditional SOC, we rely on the stability of a threat. Once a file is identified as malicious, its hash is blocked and the threat is neutralized across the environment. But when an adversary uses AI to automate the repetitive parts of coding, they can iterate almost instantly. They aren't just writing one version of LampoRAT. They are generating dozens of variants that perform the same malicious actions but look completely different to a scanner.

If your defense strategy is built on blocking yesterday’s hashes, you are playing a game of whack-a-mole that is impossible to win. To catch an actor like Boggy Serpens, the focus has to shift from what a file is to what a file does.
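A small sketch makes the whack-a-mole problem concrete. The byte strings below are harmless stand-ins, not malware, and the blocklist helper is a hypothetical simplification of hash-based blocking: two inputs that differ by a single byte produce unrelated SHA-256 digests, so blocking one says nothing about the other.

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()

# Harmless stand-ins for two functionally identical variants.
variant_a = b"example payload body v1"
variant_b = b"example payload body v2"

# Yesterday's verdict: only the first variant has been seen and blocked.
blocklist = {sha256_hex(variant_a)}

def is_blocked(data: bytes) -> bool:
    """Hash-only check: matches exact bytes, nothing else."""
    return sha256_hex(data) in blocklist

print(is_blocked(variant_a))  # True
print(is_blocked(variant_b))  # False
```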

This is where behavioral detection becomes the only viable path forward. By monitoring for the specific "tells" our researchers found, such as unexpected parent-process injections or unusual Telegram API traffic, Cortex XDR identifies the underlying pattern of the attack. The AI can reshuffle the code as many times as it wants, but the behavior stays the same. That is where we catch them.

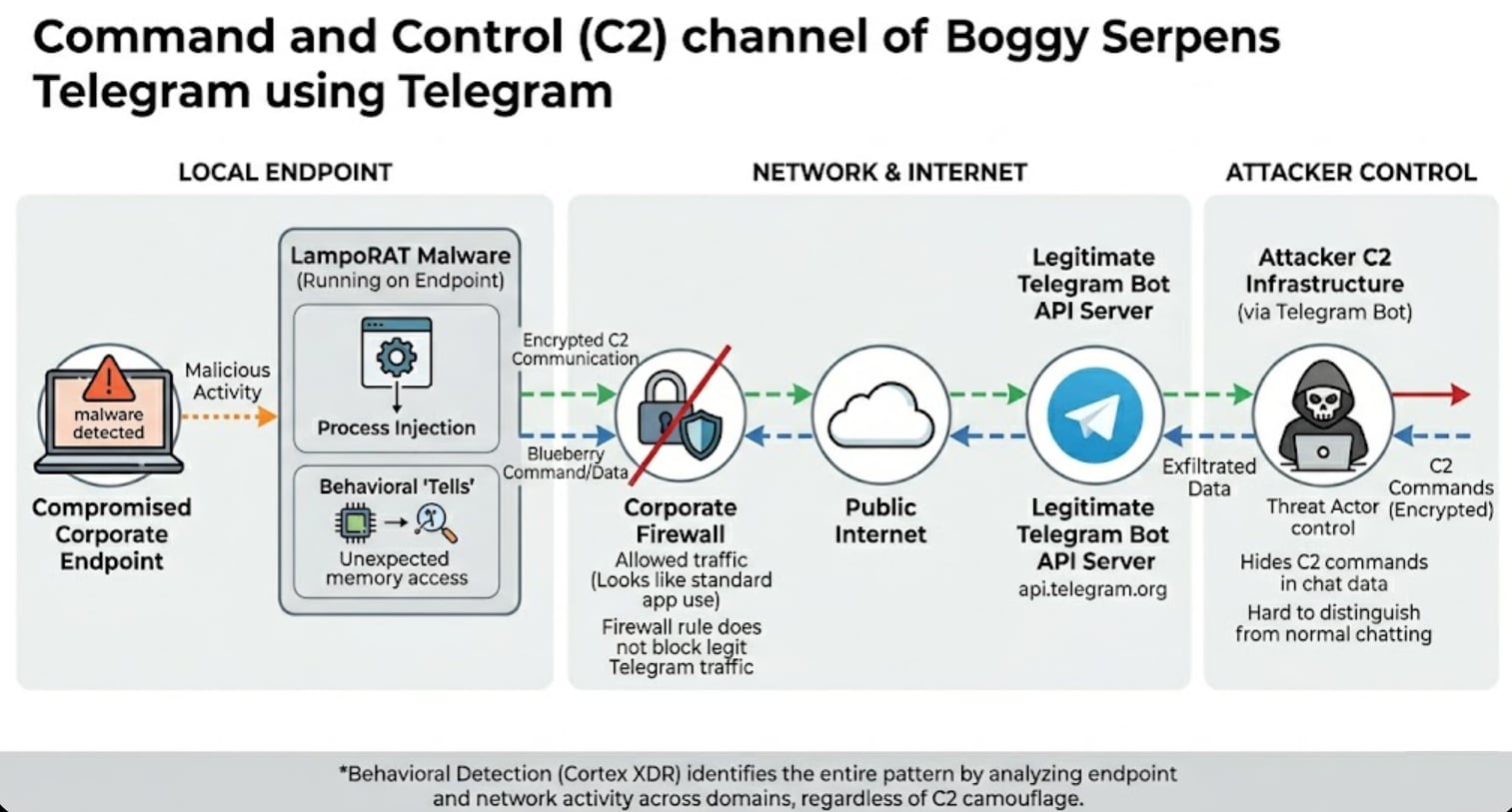

Hiding in Plain Sight: The C2 Problem

Even when the malware itself changes, the communication channel has to go somewhere. Boggy Serpens' answer is to hide inside infrastructure that organizations would never think to block.

Their primary channel is Telegram. To a network administrator, it looks like a user chatting. Meanwhile, the attacker is sending commands through the Telegram API.
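One way to hunt for this is to ask which process is talking to the Telegram Bot API host, api.telegram.org, rather than whether Telegram traffic exists at all. The sketch below is a rough illustration under stated assumptions: the record layout (process and host keys), the expected-process list, and the function name are all hypothetical and would need tuning for a real environment.

```python
TELEGRAM_API_HOST = "api.telegram.org"

# Assumption: processes you would expect to reach this host. Placeholder list.
EXPECTED_PROCESSES = {"chrome.exe", "msedge.exe", "firefox.exe"}

def unusual_telegram_traffic(connections):
    """Return connection records to the Telegram API host from unexpected processes."""
    hits = []
    for conn in connections:
        host = conn["host"].lower().rstrip(".")
        process = conn["process"].lower()
        if host == TELEGRAM_API_HOST and process not in EXPECTED_PROCESSES:
            hits.append(conn)
    return hits

# Made-up records for illustration.
connections = [
    {"process": "chrome.exe", "host": "api.telegram.org"},
    {"process": "updater.exe", "host": "api.telegram.org"},
    {"process": "updater.exe", "host": "example.org"},
]
print(unusual_telegram_traffic(connections))
# [{'process': 'updater.exe', 'host': 'api.telegram.org'}]
```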

One of their tools, Nuso, goes further by using HTTP status codes as a covert signaling system. A 404 Not Found response tells the malware to delete itself and go dormant. A 200 OK might mean start exfiltration. The conversation happens in plain sight, through traffic that looks completely normal, on infrastructure that's globally trusted.
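To see why this is hard to spot, it helps to model the scheme from the analyst's side. The sketch below simply encodes the mapping described above as a lookup table. The 200 entry is the "might mean" example from the text, treating every other code as "no instruction" is an assumption, and none of the names come from the Nuso sample itself.

```python
from enum import Enum

class Instruction(Enum):
    SELF_DELETE = "delete itself and go dormant"
    START_EXFIL = "start exfiltration"
    NO_OP = "no instruction"

# Mapping from the description above; codes not listed default to NO_OP (assumption).
STATUS_TO_INSTRUCTION = {
    404: Instruction.SELF_DELETE,
    200: Instruction.START_EXFIL,
}

def interpret(status_code: int) -> Instruction:
    """Translate an HTTP status code into the instruction it would signal."""
    return STATUS_TO_INSTRUCTION.get(status_code, Instruction.NO_OP)

for code in (200, 404, 503):
    print(code, "->", interpret(code).value)
# 200 -> start exfiltration
# 404 -> delete itself and go dormant
# 503 -> no instruction
```

Nothing in the response body needs to carry the command, which is why content inspection alone has little to work with here.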

If you're only watching for bad IPs, you're missing the entire operation.

How Cortex XDR Stopped It

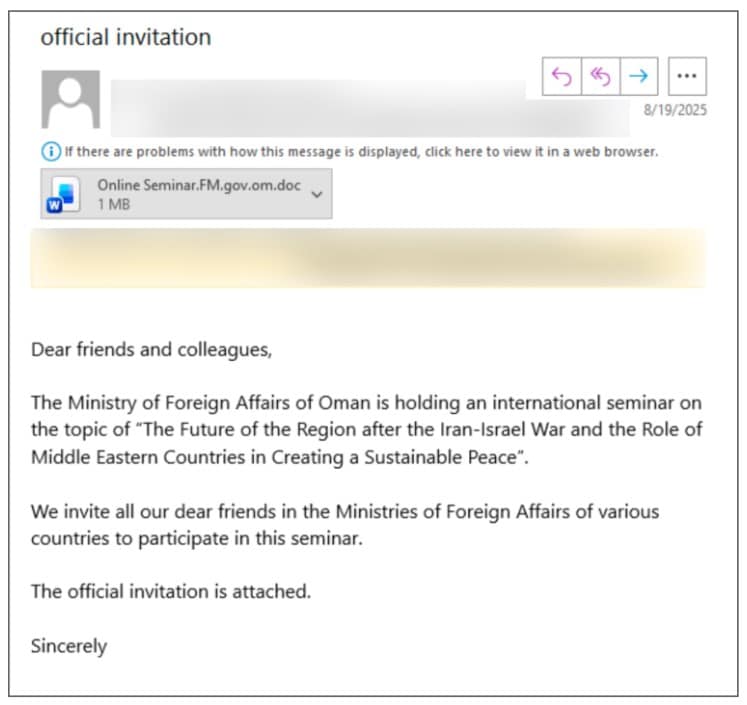

In August 2025, the group used a compromised mailbox inside the Omani Ministry of Foreign Affairs to push malicious documents to foreign ministries across the region. The lure was a seminar invitation, geopolitically timed and sent from a legitimate government address. By every traditional filter, that email passes cleanly.

What Cortex XDR caught was the behavior.

The Advanced Email Security module builds behavioral profiles of normal communication patterns for each organization. When a hijacked account suddenly sends a sustainability seminar invite or a pipeline report to an engineer who doesn't typically receive them, the system flags the anomaly, analyzing not just the sender but the content, sentiment, and tone of the message. An unusually urgent or coercive framing from an otherwise trusted diplomat? High-risk. A blurred document attachment from a sender who doesn't normally send attachments? Worth investigating.
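The idea of a per-sender baseline can be illustrated with a toy model. To be clear, this is not the Advanced Email Security module's logic; it is a deliberately simple sketch, with hypothetical class and method names and made-up addresses, that tracks only two of the signals mentioned above: who a sender normally writes to, and whether they normally send attachments.

```python
from collections import defaultdict

class SenderBaseline:
    """Toy baseline of each sender's usual recipients and attachment habit."""

    def __init__(self):
        self._recipients = defaultdict(set)
        self._sends_attachments = defaultdict(bool)

    def observe(self, sender, recipient, has_attachment):
        """Record one historical message as normal behavior."""
        self._recipients[sender].add(recipient)
        if has_attachment:
            self._sends_attachments[sender] = True

    def anomalies(self, sender, recipient, has_attachment):
        """Return the reasons a new message deviates from the baseline."""
        reasons = []
        if recipient not in self._recipients.get(sender, set()):
            reasons.append("new sender-recipient pair")
        if has_attachment and not self._sends_attachments.get(sender, False):
            reasons.append("sender does not normally send attachments")
        return reasons

baseline = SenderBaseline()
baseline.observe("diplomat@ministry.example", "desk@agency.example", False)

print(baseline.anomalies("diplomat@ministry.example", "engineer@energy.example", True))
# ['new sender-recipient pair', 'sender does not normally send attachments']
```

A real system would weigh many more features, such as the content and tone signals described above, but the principle is the same: the sender is trusted, so the question becomes whether the message fits that sender's history.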

But the detection doesn't stop at the inbox.

Cortex XDR's cross-domain correlation connects the email to the user's identity and their endpoint. When a Word document spawns PowerShell, or a Rust binary tries to establish outbound communication moments after a file opens, those signals combine. Individually, each observation is ambiguous. Together, they reveal a coordinated attack path. That's how two of the backdoor variants identified in this campaign, Phoenix and UDPGangster, were blocked.
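The correlation idea can be sketched in a few lines. This is a simplified illustration, not how Cortex XDR implements it: the Event fields, the five-minute window, and the rule that all three domains must appear are assumptions chosen to show how individually ambiguous signals add up.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Event:
    ts: float     # seconds since some reference time
    user: str
    domain: str   # "email", "endpoint", or "network"
    detail: str

def correlate(events, window_seconds=300.0,
              required=("email", "endpoint", "network")):
    """Return users whose events cover every required domain within one window."""
    by_user = {}
    for event in sorted(events, key=lambda e: e.ts):
        by_user.setdefault(event.user, []).append(event)

    flagged = {}
    for user, user_events in by_user.items():
        for i, first in enumerate(user_events):
            # Events for this user that fall inside the window starting at `first`.
            chain = [e for e in user_events[i:] if e.ts - first.ts <= window_seconds]
            if set(required) <= {e.domain for e in chain}:
                flagged[user] = chain
                break
    return flagged

# Made-up events for illustration.
events = [
    Event(0, "alice", "email", "attachment from a rarely seen sender"),
    Event(40, "alice", "endpoint", "Word document spawned PowerShell"),
    Event(55, "alice", "network", "new binary opened an outbound connection"),
    Event(10, "bob", "endpoint", "Word document spawned PowerShell"),
]
print(sorted(correlate(events)))  # ['alice']
```

In this toy data, bob's lone endpoint event stays below the threshold, while alice's three events within a minute form a chain worth acting on.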

For the Rust-based payloads, signatures are useless because the code looks different every wave. What doesn't change is the behavior: document drops a binary, binary tries to communicate outbound. The malware is new. The pattern isn't.

When a threat is confirmed, response is automated. Cortex doesn't just alert; it quarantines the malicious email from all inboxes, disables the compromised account, and isolates infected machines to stop lateral movement. Security teams wake up to a documented incident rather than an active breach.

What This Campaign Tells Us About the Threat Landscape

Boggy Serpens is a useful lens for where adversaries are right now.

The sophistication isn't just technical; it's architectural. These threat actors will lie dormant for weeks with access to a single compromised account before using it. They tailor lures to the specific person and wait for the right geopolitical moment. They run an AI-assisted development cycle that lets them iterate faster than manual analysis can keep pace with. And they follow a deliberate design philosophy built around operating below the threshold of any individual detection surface.

The email looks clean. The endpoint activity looks like normal document behavior until it isn't. The C2 traffic looks like someone using Telegram. You have to connect all three to see what's actually happening.

The organizations that catch this aren't necessarily the ones with the most tools. They're the ones who can correlate signals across email, endpoint, and network fast enough to see the full picture before it becomes a breach.

What You'll See in the Video

The investigation from Peter Havens and Nathaniel Quist reveals how a nation-state group is weaponizing trust and AI to outpace traditional detection:

- How Boggy Serpens compromises legitimate government inboxes to bypass every standard email filter

- Why tailored lures built around real names, real travel windows, and real geopolitical moments are so difficult to catch

- The shift to Rust-based malware and what makes it significantly harder to reverse-engineer

- The AI fingerprints hidden inside LampoRAT's logs and what they tell us about how fast this group is iterating

- How Boggy Serpens uses Telegram and HTTP status codes to run command and control in plain sight

- Why cross-domain correlation across email, endpoint, and network is the only way to see the full attack picture

Watch the complete investigation to see how Unit 42 and Cortex researchers pulled apart the LampoRAT malware, identified the AI fingerprints, and traced the campaign infrastructure end to end.

Want to Dig In a Little More?

Read the full threat assessment here