The public cloud has rapidly moved past the novelty and curiosity stage to become a business-critical initiative for nearly every established organization. In a 2016 IDC CloudView survey, 80% of the enterprises contacted were actively engaged in public-cloud projects. The driving forces are the realization that the public cloud is “enterprise ready” and the need to be more agile, more responsive and more competitive.

For those organizations that already have an existing physical IT infrastructure, the common starting point is a hybrid approach, which extends the existing data center into Microsoft® Azure™.

The VM-Series for Azure allows you to securely enable a hybrid cloud by incorporating security concepts that include segmentation policies, much like the prevention techniques used on your physical network. This document complements the VM-Series technical documentation with added guidelines and recommendations that address common questions arising during hybrid use-case deployments in Azure.

The Hybrid Cloud: Transition or End State?

The question of whether a hybrid approach is a transition or the end state depends on your definition. In most cases, what begins as a transition toward “everything in the cloud” becomes an end state because eliminating the data center assets is not possible for a variety of reasons. In other cases, the hybrid approach is by design – data remains on premises, while application processing is deployed in Azure. An alternative is the reverse scenario, where data collection and pre-processing are done in the cloud and processing is then completed on premises. The data collection and pre-processing scenario is typically coupled with the need to address traffic and CPU bursting requirements.

An increasingly common hybrid cloud scenario is to use it as a means of managing separate application development, testing and production environments. Small pilot projects can easily be spun up, and because you’re using an IPsec VPN, you can expand your network from the private cloud into the public cloud seamlessly. An overlay network using VPNs not only provides privacy over shared networks but the VPNs also reduce the number of Layer 3 hops on the end-to-end network. This allows you to expand your internal IP address space into the public cloud using widely supported routing protocols.

As your application moves through the process, resources can be commissioned/decommissioned quickly and efficiently. Not only do you eliminate the need to invest in hardware for a short-term project, you can easily segregate the VNet from the internal network from a security standpoint while still allowing seamless traffic flow from a routing perspective. The development/testing VNet looks like an extension of your own data center but still has an easy point of demarcation for the purpose of security policy enforcement.

An added benefit to a hybrid approach is transparency between the public and private cloud. Routes for the directly attached networks in the public cloud can be redistributed into an OSPF process running in your existing data center. These routes can then be dynamically shared with your on-premises firewalls and routers via OSPF updates. The end result is that the traffic routed to a database server uses the exact same mechanism, regardless of whether the database subnet is on-site, in the private cloud, in the public cloud or both.

Regardless of whether it is a transition or an end state, the applications and data in your hybrid cloud need to be protected with the very same rigor that you might apply to your existing data center. Read more about hybrid clouds in Azure.

Azure Security: Who Does What?

From a security perspective, implementing a hybrid approach does not eliminate or minimize your cybersecurity challenges. The continual operation of your organization’s digital infrastructure is location agnostic. Your users, your customers, and your partners do not care where the applications and data reside. They expect them to work and to be secure, regardless of location. Unfortunately, attackers are also location agnostic. Regardless of the location – public, private cloud or physical data center – your applications and data are an attacker’s target, and protecting them in Azure introduces the same security challenges that are present within your on-premises network. These challenges include a lack of control over your network traffic based on the application and an inability to prevent cyberattacks.

Using the Azure infrastructure introduces a question every user must understand and answer: Who is responsible for protecting my applications and data in Azure? On the surface, the answer is simple: Microsoft is responsible for protecting the infrastructure, which would include elements such as the physical data centers powering the Azure environment, inclusive of compute, networking and storage resources and the standard Azure services. Your responsibility begins at your account level, and includes elements such as basic account security, security extending into your operating system and networking configurations, the services you deploy, and the data in your account. Before moving to the public cloud, it is critical that you and your decision-making team know and understand the security model for your selected vendor. Watch this brief Microsoft video to learn more about the security responsibilities in Azure.

Security Challenges in Azure

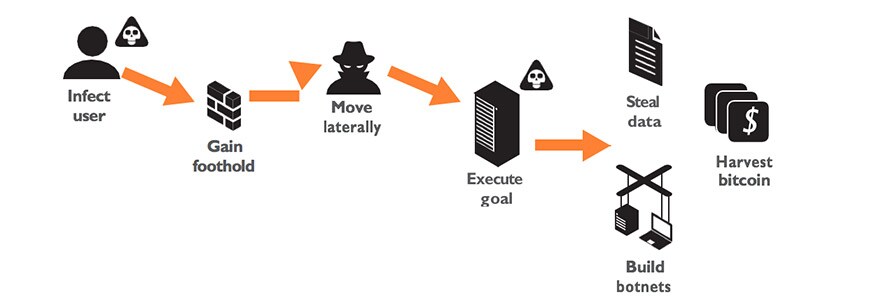

There is a common misconception that moving to the public cloud improves one’s security posture so much that additional cyberattack protection mechanisms become unnecessary. The reality is that Azure, like any other public cloud, is based on the same TCP/IP networking concepts, runs the same applications and (port-based) protocols, and as such, is exposed to the same security risks that you face when protecting a physical network. Attackers do not care where your applications and data reside. They find a way onto the network, establishing a foothold by using a range of spear-phishing techniques or exploit kits. Once they are established, lateral movement occurs until a target or end goal is identified. The execution phase then begins. This pattern can be seen in one form or another in almost every one of the thousands of data-loss incidents over the past few years.

Image 1: The attack cycle is the same across physical, private or public cloud environments

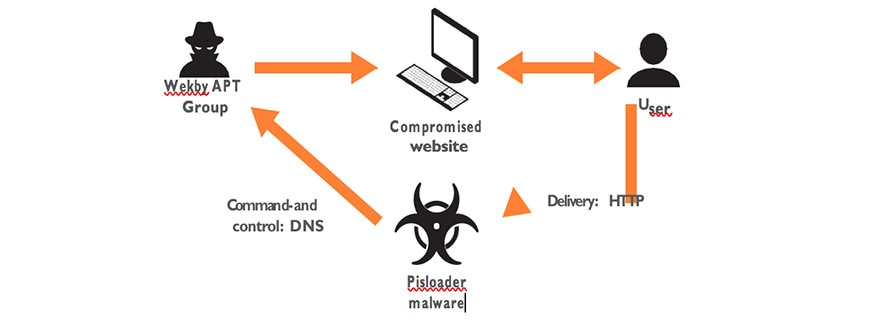

The common response to added security in the cloud is: “I only have a small presence and it’s not exposed.” Granted, the exposure may be lower, but the risk exists. A perfect example of the need for added cyberattack protection is the recent Pisloader attack, developed by the Wekby APT Group. This attack began as a run-of-the-mill attack that infected a user via a compromised website.

Image 2: The Pisloader attack uses DNS on its standard port to perform command and control

Once the user was compromised, the Pisloader malware was installed, initiating command and control by using DNS on its standard port – TCP/53. DNS is used universally on the network and in the cloud on TCP/53, so basic security mechanisms would not be able to detect – much less prevent – this type of attack. Third-party, public-cloud security solutions exhibit the same weaknesses found when they are deployed on the physical network – they make their initial, positive-control network access decisions based on port by using stateful inspection; then they make a series of sequential, negative-control decisions by using bolted-on feature sets.

There are several problems with this approach:

- Ports-first or ports-only approaches limit visibility and control. Using TCP and UDP ports to block certain traffic can be an effective means of limiting traffic access, but enabling an application based on ports is problematic. Solutions that focus on ports first have a limited ability to see all traffic on all ports, which means that applications running on multiple ports, or those that hop ports as an accessibility feature, may not be identified. For example, Microsoft Lync®, Active Directory® and SharePoint® use a wide range of contiguous ports, including ports 80 and 443, to function properly. This means you need to first open all those ports, exposing those same ports to other applications or cyberthreats. Only then can you try to exert application control.

- They lack any concept of unknown traffic. Unknown traffic epitomizes the 80-20 rule – it is high-risk, yet it is a small amount of traffic on every network. Unknown traffic is found on every port and can be a custom application, an unidentified commercial application, or a threat. Blocking it all, a common recommendation, may cripple your business. Allowing it all is perilous. You need to be able to systematically manage unknown traffic down to a low-risk percentage using native policy management tools, thereby reducing your security risks. Systematically managing unknown traffic means being able to find it quickly, analyze it to determine the next steps, and then take those next steps accordingly.

- Multiple policies, no policy reconciliation. The sequential traffic analysis (stateful inspection, application control, IPS, AV, etc.) performed by existing solutions requires a corresponding security policy or profile for each step, oftentimes using multiple management tools. The result is that your security policies become convoluted as you build and manage a firewall policy with source, destination, user, port and action, and an application control policy with similar rules, in addition to other threat prevention rules. This reliance on multiple security policies that mix positive (firewall) and negative (application control, IPS, AV, etc.) control models without any policy reconciliation tools introduces potential security holes through missed or unidentified traffic.

- Cumbersome security policy updates. Finally, existing security solutions in the data center do not address the dynamic nature of your cloud environment and cannot adequately keep policies in step with virtual machine additions, removals or changes.

Is Native Azure Security Sufficient?

In addition to third-party security offerings, Azure provides a range of native security services that can help customers protect their applications and data, including security groups. Security groups and ACLs are port- and IP-based controls that provide filtering capabilities within your Azure deployment. A security group is a port-based access control list and, as such, is unable to identify and control traffic at the application level. This means that you will not know the identity of the applications being allowed by a security group; you will only see the port and associated TCP or UDP service. In addition, a security group does not support any advanced threat prevention features. So in the Wekby attack described earlier, a security group could not have caught the attack, and it would have allowed the Pisloader command and control traffic.
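To make this limitation concrete, the following minimal Python sketch models the kind of decision a port-based filter makes. It is an illustration only: the `Rule` and `Packet` types, the `port_based_allow` function and the application labels are hypothetical names chosen for this example and are not part of any Azure API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """A port-based allow rule: protocol and destination port only."""
    protocol: str
    dst_port: int


@dataclass(frozen=True)
class Packet:
    protocol: str
    dst_port: int
    application: str  # what is really inside; invisible to a port-based filter


def port_based_allow(rules, packet):
    """Return True if any rule matches the packet's protocol and port."""
    return any(
        r.protocol == packet.protocol and r.dst_port == packet.dst_port
        for r in rules
    )


# Hypothetical rule set: allow DNS on its standard port.
rules = [Rule("tcp", 53)]

legitimate_dns = Packet("tcp", 53, "dns")
c2_over_dns = Packet("tcp", 53, "pisloader-c2")

# The filter gives the same answer for both packets because the
# application field never takes part in the decision.
print(port_based_allow(rules, legitimate_dns))
print(port_based_allow(rules, c2_over_dns))
```

Because the rule only compares protocol and port, the command and control packet is treated exactly like legitimate DNS, which is the behavior described above.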

VM-Series for Azure Summary

Whereas a security group provides some initial filtering or highly customized web-application security, the Palo Alto Networks® VM-Series for Azure allows you to protect your Azure deployment from cyberattacks. The VM-Series for Azure natively analyzes all traffic in a single pass to determine the application identity, content within and user identity. These core business elements can then be used as integral components of your Azure security policy, allowing you to:

- Identify what’s traversing your Azure deployment. With knowledge comes power. Identifying the applications in use in your Azure deployment, regardless of port, gives you unmatched visibility into your Azure environment. Armed with this knowledge, you can make more-informed security policy decisions.

- Enable applications and your Using the application as the basis for your Azure security policy allows you to create application whitelisting and segmentation policies that leverage the deny-all-else premise that a firewall is based upon; allow the applications you want in use, and then deny all others.

- Prevent advanced threats. To further protect your Azure environment, you can deploy application-specific threat prevention policies that will block both known and unknown malware.

- Prevent the spread of malware within your Azure deployment. As in your private data center, the public cloud often has application tiers with some traffic contained entirely within the cloud. Without visibility and control over this east-west traffic, malware can quickly spread from an initial attack vector to other resources within the cloud.

VM-Series for Azure Licensing

The two VM-Series for Azure licensing options are the traditional, bring-your-own-license model or the pay-as-you-go model available from the Microsoft Azure Marketplace.

- BYOL: Any one of the VM-Series models, along with the associated Subscriptions and Support, is purchased via normal Palo Alto Networks channels and then deployed through your Azure management console.

- PAYG: Purchase one of two bundles that include a VM-Series license, select Subscriptions and Premium Support as an hourly subscription bundle from the Azure Marketplace.

- Bundle 1 contents: VM-300 firewall license, Threat Prevention Subscription (inclusive of IPS, AV, malware prevention) and English-only Premium Support.

- Bundle 2 contents: VM-300 firewall license, Threat Prevention (inclusive of IPS, AV, malware prevention), WildFire™ threat intelligence service, URL Filtering, GlobalProtect Subscriptions and English-only Premium Support.

Sizing Considerations for Your Azure Deployment

Establishing an Azure presence entails many of the same steps used to build out an on-premises IT infrastructure. Common steps include determining the size and volume of computing resources needed, network requirements, and software licensing options. Some of the VM-Series for Azure key sizing and implementation considerations are listed below.

- Deployment via ARM: You must deploy the VM-Series firewall in the Azure Resource Manager (ARM) mode only; the classic mode (Service Management-based deployments) is not supported.

- Azure VMs of the following types: Standard_D3 (default), Standard_D3_v2, Standard_D4, Standard_D4_v2,

- CPU: Four or eight CPU cores to deploy the firewall; the management plane uses only one CPU core and the additional cores are assigned to the data plane.

- Network interfaces: Up to four network interfaces (NICs); a primary interface is required for management access and up to three interfaces for data traffic. Note: In Azure, because a virtual machine does not require a network interface in each subnet, you can set up the VM-Series firewall with just two network interfaces (one for management traffic and one for data-plane traffic). To create zone-based policy rules on the firewall, you need at least two data-plane interfaces in addition to the management interface, so that you can assign one data-plane interface to the trust zone and the other to the untrust zone.

- Interface modes: Because the Azure VNet is a Layer 3 network, the VM-Series firewall in Azure supports Layer 3 interfaces only.

- RAM: Minimum of 4GB of memory for all models except the VM-1000-HV, which needs Any additional memory will be used by the management plane only.

- Disk space: Minimum of 40GB of virtual disk space. You can add additional disk space of 40GB to 8TB for logging purposes. The VM-Series firewall does not utilize the temporary disk that Azure provides.
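The numeric limits in the list above can be captured in a simple pre-deployment check. The following Python sketch is a hypothetical helper, not a Palo Alto Networks or Azure tool: the function name and parameters are invented for illustration, a minimum of two NICs (one management, one data plane) is assumed from the note on network interfaces, 8TB is taken as 8 × 1024 GB, and the VM-1000-HV memory requirement is deliberately left out because it is not stated above.

```python
def check_vm_series_sizing(cpu_cores, nics, ram_gb, disk_gb,
                           log_disk_gb=0, zone_based_policy=False):
    """Return a list of problems; an empty list means the plan fits the limits."""
    problems = []

    # Four or eight CPU cores are expected.
    if cpu_cores not in (4, 8):
        problems.append("cpu_cores must be 4 or 8")

    # One management NIC plus one data NIC at minimum (assumption);
    # zone-based policy needs two data-plane NICs, so three in total.
    min_nics = 3 if zone_based_policy else 2
    if not min_nics <= nics <= 4:
        problems.append(f"nics must be between {min_nics} and 4")

    # 4GB minimum; the VM-1000-HV figure is not covered by this check.
    if ram_gb < 4:
        problems.append("ram_gb must be at least 4")

    if disk_gb < 40:
        problems.append("disk_gb must be at least 40")

    # Optional logging disk: 40GB to 8TB when present.
    if log_disk_gb and not 40 <= log_disk_gb <= 8 * 1024:
        problems.append("log_disk_gb must be between 40 and 8192")

    return problems


# A two-NIC plan cannot support trust and untrust zones.
print(check_vm_series_sizing(cpu_cores=4, nics=2, ram_gb=4, disk_gb=40,
                             zone_based_policy=True))
```

Returning a list of problems rather than raising on the first one lets a planner see every constraint that a proposed configuration violates at once.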

Securely Enabling Your Hybrid Cloud in Azure

A hybrid cloud combines your existing data center (private cloud) resources, over which you have complete control, with ready-made IT infrastructure resources (e.g., compute, networking, storage, applications and services) found in IaaS or public cloud offerings, such as Azure. The private cloud component is one or more of your data centers that you have complete control over, while the public cloud component is IaaS-based and allows you to spin up fully configured computing environments on an as-needed basis.

Establishing a Connection to Azure: IPsec VPN or Azure ExpressRoute?

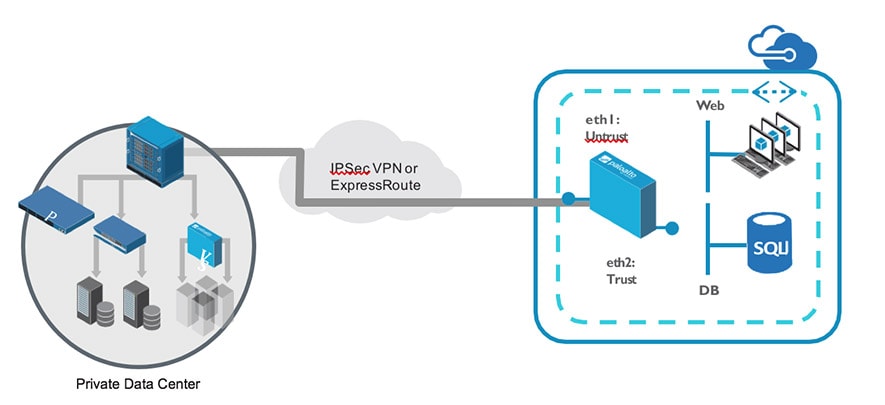

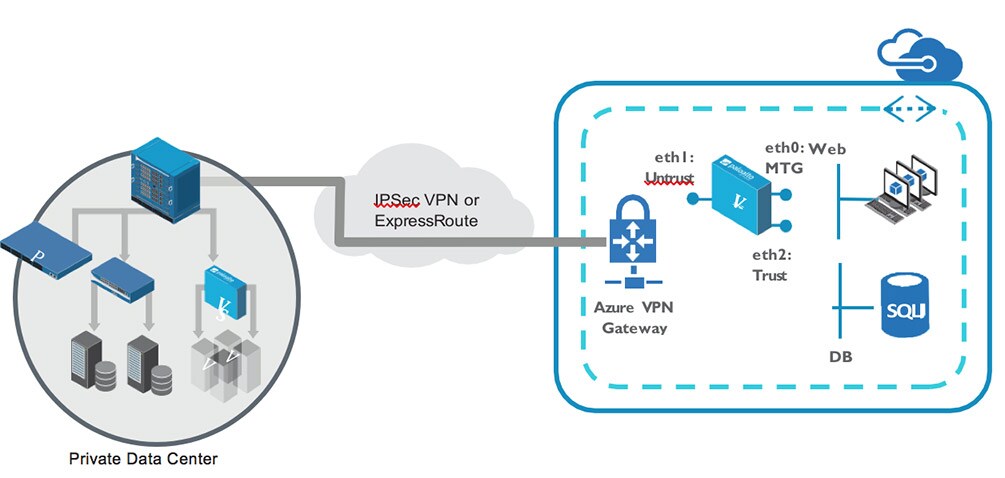

The connection between your private and public clouds should be one or more IPsec VPNs, or you can use the Azure ExpressRoute service. The Azure ExpressRoute service provides a mechanism for customers to establish a dedicated network connection from their own premises to Azure. This provides dedicated connectivity with the performance levels granted by the customer’s service provider. The ExpressRoute connection uses either: 1) a cross-connect at a co-located cloud exchange; 2) point-to-point Ethernet connections; or 3) IPVPN networks.

Image 3: A hybrid cloud can use either IPsec VPN or ExpressRoute

Many Azure customers prefer that the entire connection be IPsec-encrypted all the way into the VNet – even when ExpressRoute is used. This provides an extra layer of security for their network traffic. In this scenario, the hybrid cloud solution looks no different from the perspective of the VM-Series firewall than it does if the internet is used instead of ExpressRoute. In either case, the solution is the same, including routing, redundancy, managed scale, etc. For maximum security and flexibility in a hybrid cloud architecture, terminating IPsec tunnels on the VM-Series firewall or an Azure VPN gateway directly inside the VNet is recommended, including when ExpressRoute is used. More information about this service can be found here.

Image 3 depicts a simplified version of a hybrid cloud topology. It includes an Azure virtual network (VNet) on the right side, which is a logically separated set of resources dedicated to one customer but running on a shared infrastructure. An introduction to the Azure VNet can be found here. On the left side of the image is the existing private data center with redundant connectivity to the Azure VNet. In this example, a new, two-tiered application with database and web tiers is deployed in the public cloud. The VM-Series in the VNet is securing the traffic in and out of the VNet, as well as east-west traffic within the VNet, just as the physical firewall in the private data center might do.

Routing in Azure

Most routing in Azure is defined using user-defined routes, or UDRs. UDRs give the cloud administrator control over most aspects of routing within the VNet. An introduction to UDRs can be found here.

For the VM-Series integration in an Azure VNet, we use UDRs to pass traffic through the firewall. This ensures, for example, that traffic heading to a server flows symmetrically through the VM-Series. This also ensures that in the inter-subnet, or east-west use case, traffic initiated by, say, the web server destined to the database server also flows symmetrically through the VM-Series firewall. This allows us to not only secure traffic in and out of the VNet, but also traffic between subnets within the same VNet.
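The effect of these UDRs can be sketched as a longest-prefix-match lookup in which the more specific user-defined route overrides the VNet-wide route. The Python example below is a simplified model under stated assumptions: the address plan, the `vnet-local` label and the single `FIREWALL_IP` are hypothetical, and a real deployment would typically use a separate firewall interface address per subnet.

```python
import ipaddress

# Hypothetical address plan: web tier 10.0.1.0/24, database tier 10.0.2.0/24.
FIREWALL_IP = "10.0.0.4"

# Route table applied to the web subnet: database traffic goes via the firewall.
web_subnet_routes = [
    ("10.0.0.0/16", "vnet-local"),   # VNet-wide route (direct delivery)
    ("10.0.2.0/24", FIREWALL_IP),    # user-defined route to the database subnet
]

# Route table applied to the database subnet: return traffic takes the same path.
db_subnet_routes = [
    ("10.0.0.0/16", "vnet-local"),
    ("10.0.1.0/24", FIREWALL_IP),    # user-defined route to the web subnet
]


def next_hop(routes, destination):
    """Return the next hop of the most specific route that contains destination."""
    dest = ipaddress.ip_address(destination)
    candidates = []
    for prefix, hop in routes:
        network = ipaddress.ip_network(prefix)
        if dest in network:
            candidates.append((network.prefixlen, hop))
    if not candidates:
        return None
    # The longest prefix wins, so the /24 UDR overrides the /16 route.
    return max(candidates)[1]


# Web server to database server, and the reply in the other direction.
print(next_hop(web_subnet_routes, "10.0.2.10"))
print(next_hop(db_subnet_routes, "10.0.1.10"))
```

Because both route tables send inter-subnet traffic to the same firewall, the request and the reply traverse it, which is what makes the east-west flow symmetric.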

For the hybrid use case where we terminate the IPsec tunnel directly on the VM-Series firewall, the address space in your private data center can be extended to the VNet and natively routed between the environments as though they were directly adjacent. Routes can be defined statically, or a dynamic routing protocol such as OSPF or BGP can be used to provide an extra layer of redundancy and failover.

Public IPs and NAT

Azure will have the ability to assign multiple public IPs to a VNet instance, including our firewall. Until that feature is released, only the primary interface can have a public IP address. For the VM-Series firewall, that is the management interface. This makes managing the firewall remotely simple but does not easily allow traffic from the internet to reach the data plane, which is eth1 of the instance (or E1/1 in PAN-OS). So we need another mechanism for getting publicly routed traffic to the VM-Series data plane.

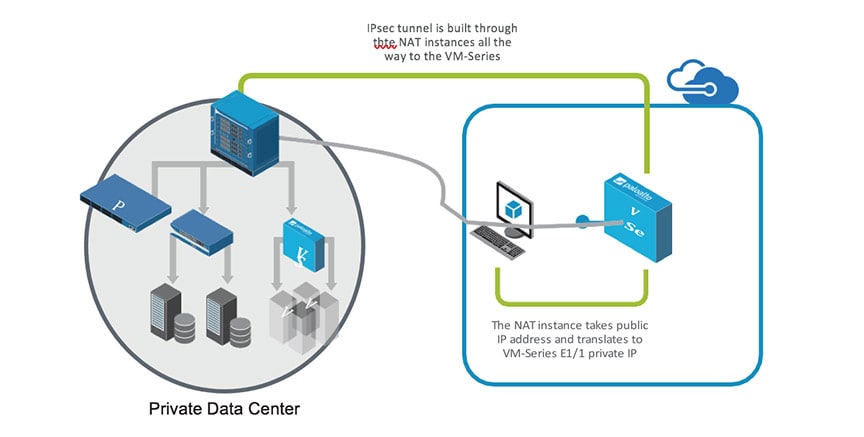

In the hybrid use case, there are two possible solutions: Use a NAT instance or use the Azure VPN gateway.

Image 4: Deploying a NAT instance to address support for multiple public IPs

Using a NAT Instance

In the case of the NAT instance, we require a worker node, or basic Linux® instance, that takes all traffic on its primary interface and NATs it to the secondary (E1/1) interface of the VM-Series. An IPsec tunnel would then be set up to pass through the NAT instance and terminate on the VM-Series itself, as shown in image 4.

For redundancy, we would have two firewalls, each with its own NAT instance and two IPsec tunnels – one per firewall. Then we would use a dynamic routing protocol, such as OSPF, to create automatic failover in case of an issue and we could even add a combination of ECMP and SNAT to share the traffic across both firewalls.
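The traffic-sharing idea behind ECMP can be illustrated with a flow hash: every packet of a given flow maps to the same firewall, while different flows spread across both. The Python sketch below is a conceptual model only. The firewall names, the sample flow and the use of SHA-256 are assumptions made for illustration; real routers use their own, implementation-specific hash functions.

```python
import hashlib

# Hypothetical names for the two redundant firewalls.
FIREWALLS = ["vm-series-a", "vm-series-b"]


def pick_firewall(flow, firewalls=FIREWALLS):
    """Map a flow 5-tuple to one firewall, deterministically."""
    # Join source IP, destination IP, protocol, source port and
    # destination port into one key so the whole flow hashes together.
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:4], "big") % len(firewalls)
    return firewalls[index]


flow = ("192.168.10.5", "10.0.1.10", "tcp", 51000, 443)

# Repeated lookups for the same flow always choose the same firewall.
print(pick_firewall(flow))
print(pick_firewall(flow))
```

Keeping a flow on one firewall matters because each firewall tracks session state; the source NAT mentioned above serves the related purpose of steering return traffic back to the firewall that handled the request.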

The NAT instance itself uses iptables to create the NAT rule. The drawback is that this adds another device that requires monitoring and maintenance; however, this is a short-term solution pending multiple public IPs per instance in Azure, and it lets customers try out the use case right away.
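As a rough illustration of what such a NAT rule might look like, the Python sketch below only builds the command strings and does not execute them. It rests on several assumptions: the interface name `eth0` and the address `10.0.1.4` are placeholders, and the rules are narrowed to the UDP ports used by IKE (500) and IPsec NAT traversal (4500) rather than all traffic. A working NAT instance would also need IP forwarding enabled in the kernel and rules appropriate to its own environment.

```python
def ipsec_dnat_rules(ingress_interface, firewall_ip):
    """Build iptables commands that forward IKE and NAT-T traffic to the firewall."""
    rules = []
    for port in (500, 4500):  # IKE and IPsec NAT traversal, both over UDP
        rules.append(
            f"iptables -t nat -A PREROUTING -i {ingress_interface} "
            f"-p udp --dport {port} -j DNAT --to-destination {firewall_ip}"
        )
    return rules


# Placeholder values: primary interface of the NAT instance and the
# private address of the VM-Series data-plane (E1/1) interface.
for rule in ipsec_dnat_rules("eth0", "10.0.1.4"):
    print(rule)
```

Generating the rules from parameters keeps the two port entries consistent and makes it easy to produce a matching rule set for the second NAT instance in a redundant pair.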

Image 5: The VM-Series fully deployed to protect a hybrid cloud

Azure VPN Gateway

The other option for getting traffic to the VM-Series firewall is to instead deploy the Azure VPN gateway in front of the VM-Series firewall(s) and terminate the IPsec tunnel on the gateway instead of the VM-Series itself. This alleviates the problem of having only one public IP per instance and looks like the following:

This works well to solve the issue of limited public IPs, as the VM-Series doesn’t require a public IP. The VM-Series will then have a route (static or dynamic) for the private cloud pointing toward the VPN gateway, and the data center IPsec termination point (i.e., physical firewall) will have routes for the web and DB subnets that point to the tunnel.

Putting It All Together

Regardless of how you terminate the connection between the private data center and the Azure VNet, the hybrid cloud use case gives you a balance between full control over your own data center, including a place to run legacy applications, and all the advantages offered by the public cloud. You get all of this without sacrificing the level of security and control you’ve come to expect in your own data center.

In addition, Panorama® network security management can optionally be used to manage not only your physical, on-premises Palo Alto Networks firewalls, but also the VM-Series firewall in the Azure VNet. The VM-Series firewalls have the same user interface and API and are fully supported via Panorama. Panorama can also be used as a central point of visibility when used as a log aggregation system. To learn more about the VM-Series for Azure, visit https://azure.paloaltonetworks.com.