Having joined Palo Alto Networks after a 35-year career in the U.S. military, the last decade of which I spent in a variety of leadership positions in cyber operations, strategy and policy, I have found that many of the cybersecurity challenges we face from a national security perspective are the same ones faced in the broader international business world.

This blog post series describes what I consider to be four major imperatives for cybersecurity success in the digital age, regardless of whether your organization is a part of the public or private sector.

In my first two blogs, I covered Imperatives #1 and #2. Here are the major themes for each imperative:

- Imperative #1 – We must flip the scales (published February 16, 2016)

- Imperative #2 – We must broaden our focus to sharpen our actions (published March 12, 2016)

- Imperative #3 – We must change our approach

- Imperative #4 – We must work together

Imperative #3 – WE MUST CHANGE OUR APPROACH

Before I get to the details, allow me to review some background and context, and then provide an executive summary of Imperative #3 in case the reader is pressed for time.

As in my previous two blogs, I use the four factors in Figure 1 to explain the concept behind Imperative #3 comprehensively.

Figure 1

- Threat: This factor describes how the cyberthreat is evolving and how we are responding to those changes.

- Policy and Strategy: Given our assessment of the overall environment, this factor describes what we should be doing and our strategy to align means (resources and capabilities – or the what) and ways (methods, priorities and concepts of operations – or the how) to achieve ends (goals and objectives – or the why).

- Structure: This factor includes both organizational (human dimension) and architectural (technical dimension) aspects.

- Tactics, Techniques and Procedures (TTP): This factor represents the tactical aspects of how we actually implement change, where the rubber meets the road.

In this third blog of the series, I’d like to describe Imperative #3 using the conceptual model outlined above and step through its implications.

Figure 2

EXECUTIVE SUMMARY:

What we have been doing in the past simply isn’t working. We MUST change our approach!

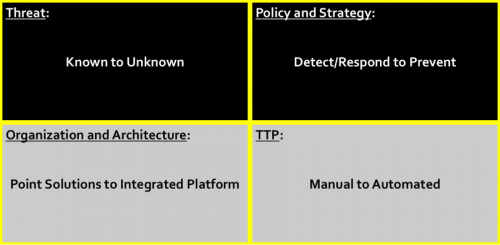

The first change in approach is to move away from a focus on known threat signatures toward a focus on suspicious activity, in order to make unknown threat signatures known as rapidly as possible. We must scale the discovery of indicators of compromise through automation, determine in near real time that malicious techniques are being employed, produce mitigations for those techniques, and automatically push those mitigations out to adjust the security posture of our networks and devices. Doing so imposes increasing costs on adversaries because it forces them to change their entire breach playbooks.

Next, we must change our policies and strategies, recognizing that detection and response, while critical cybersecurity functions, cannot resolve the problem on their own. It takes a strong prevention mindset, policies driven by the organization’s leadership, and accompanying strategies that align resources and methods to achieve the desired results.

An effective prevention-first policy also requires us to change our approach. We must evolve from the legacy view that success comes from bolting a collection of independent point solutions onto an organization’s network enterprise, and move toward a natively integrated platform approach. The point solution approach looks at different “pieces” of the attack lifecycle and creates an enormous volume of alerts, mostly false positives, which causes a great deal of work. Because the point solutions are not natively integrated, they cannot communicate with each other to put the whole threat picture together, so assembling that picture takes lots of equipment, bandwidth, time, people and money. Next-generation technology is designed to examine the entire lifecycle as a whole in a single pass, and it leverages automation to alert only on threat playbooks and block them across the network enterprise.

Finally, at the procedural and technique level, there is magic in leveraging automation in ways we have not yet done. Traditionally, in a “detect and respond” approach, we measure our “incident response” in days, weeks and months because we rely almost exclusively on human decision-making and manual action. We must change our approach so that we leverage automation wherever possible and reserve the slower, manual procedures for what only humans must do or are best suited to do. Automation in an integrated platform approach reduces your workload, gives you better visibility of potential attackers, and safely enables your organization to carry out its critical business functions.

DETAILED DESCRIPTION OF IMPERATIVE #3:

THREAT

So here’s the first important change in approach. We have to move from focusing on known threats and signatures to an approach that focuses on UNKNOWN threats … or at least making unknown threats known as quickly as possible (hopefully in near real time) so that we can then do something about them.

We have a problem that is giving today’s cyberthreats a significant advantage over our ability to secure and defend our networks. This problem pits a growing adversary marketplace that leverages information sharing, automation and the cloud at increasing speed and decreasing costs against an oftentimes slow, clumsy, manual and increasingly expensive cybersecurity community. Here’s where we can begin to get ahead and increase the cost to cyber adversaries.

If we can begin to scale the discovery of indicators of compromise through automation, determine in near real time that “bad” techniques are being employed, produce mitigations for those techniques, and automatically push them out to adjust the security posture of our networks and devices, then we can begin to make a dent in adversaries’ agility, flexibility and scale.
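To make this automated loop more concrete, here is a minimal Python sketch built on several assumptions. `EnforcementPoint`, `looks_malicious` and `automate` are hypothetical names invented for illustration. The "verdict" is a trivial keyword check standing in for real analysis such as sandboxing, and the mitigation is simply a SHA-256 fingerprint added to each device's blocklist. The sketch shows only the shape of the discover, mitigate and push cycle. It is not how any Palo Alto Networks product works.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass, field
from typing import Iterable


@dataclass
class EnforcementPoint:
    """Hypothetical network or endpoint device that enforces a blocklist."""
    name: str
    blocklist: set[str] = field(default_factory=set)

    def allows(self, sample: bytes) -> bool:
        # Allow anything whose fingerprint is not yet a known mitigation.
        return hashlib.sha256(sample).hexdigest() not in self.blocklist


def looks_malicious(sample: bytes) -> bool:
    """Placeholder verdict (assumption); real analysis would examine behavior, not keywords."""
    return b"evil" in sample


def automate(samples: Iterable[bytes], fleet: list[EnforcementPoint]) -> set[str]:
    """Turn unknown samples into known mitigations and push them to every device."""
    # 1. Discover and mitigate: fingerprint every sample the automated verdict flags.
    new_mitigations = {
        hashlib.sha256(s).hexdigest() for s in samples if looks_malicious(s)
    }
    # 2. Push: update every enforcement point's posture with no human in the loop.
    for point in fleet:
        point.blocklist |= new_mitigations
    return new_mitigations
```

For example, calling `automate([b"evil payload", b"benign"], fleet)` on a two-device fleet returns one new mitigation. After that call, every device blocks `b"evil payload"` and still allows `b"benign"`. A hash is the narrowest possible mitigation, which is exactly the "eye color" weakness discussed in the architecture section. The same loop could distribute broader, technique-level protections instead.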

Remember, making an unknown threat known in a matter of minutes still means that something got through before it was discovered. But if you can do all of the above in near real time, you can still stop the attack, because the attack lifecycle usually takes more than a few minutes to complete. Almost any cyber actor must work through every step of a playbook to achieve the final goal. First, they gather information and perform reconnaissance. Next, they conduct the initial compromise. Then they lay down an exploit or inject malware, establish command and control, and use privileged access to move through the network to the right location. Finally, they achieve their objective, whether it be the exfiltration of information, disruption, deception or destruction.

This takes time – usually at least hours, but it can take days, weeks and even months depending on how stealthy a cyber actor wants to be.
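A back-of-the-envelope Python sketch can check this timing argument. The stage names follow the lifecycle listed above. The per-stage durations in `ASSUMED_MINUTES` are invented for illustration, and `stage_when_blocked` is a hypothetical helper. The function reports which stage the adversary has reached when a mitigation lands. It measures response time from the moment the initial compromise succeeds, which is the point where "something got through."

```python
from __future__ import annotations

from itertools import accumulate
from typing import Optional, Sequence

# Lifecycle stages in the order described in the text.
STAGES = (
    "reconnaissance",
    "initial compromise",
    "exploit or malware",
    "command and control",
    "movement through the network",
    "objective",
)

# Hypothetical per-stage durations in minutes (illustrative assumptions only).
ASSUMED_MINUTES = (60, 10, 5, 15, 120, 30)


def stage_when_blocked(response_minutes: float,
                       durations: Sequence[float] = ASSUMED_MINUTES) -> Optional[str]:
    """Return the stage the adversary is in when the mitigation lands,
    or None if the playbook has already reached its objective.

    response_minutes is measured from the end of the initial compromise.
    """
    finish = list(accumulate(durations))       # end time of each stage
    landed_at = finish[1] + response_minutes   # index 1 = initial compromise
    for stage, end in zip(STAGES, finish):
        if landed_at < end:
            return stage
    return None  # objective already achieved
```

With these assumed numbers, `stage_when_blocked(5)` returns `"command and control"`. A mitigation pushed five minutes after the compromise therefore still stops the playbook well before the objective. By contrast, `stage_when_blocked(3 * 24 * 60)`, a response measured in days, returns `None` because the adversary finished long before.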

So focusing on turning unknowns into knowns as quickly as possible forces the adversaries to change their entire playbooks at a rate that begins to put them on the wrong side of the problem.

POLICY and STRATEGY

I believe this next point is critical if we want to deal effectively with something that not only threatens our national security but also threatens our international stability and economic vitality. As a community, we have traditionally taken an approach that focuses on detection and response. I’m not making light of detection and response; they are, no doubt, vital. But they are insufficient. We are never going to get ahead of the problem if we don’t change our approach to focus first on prevention, while also having good detection, response and resilience in place.

I believe this imperative starts at the leadership level of any organization, and therefore I include it in the policy category. Once leadership buys in, the challenge is to turn a policy of prevention into a viable strategy and to align the organization’s limited resources with effective methods to achieve its goals and objectives.

ARCHITECTURE

If you accept the change in approach to focus on prevention in addition to detection and response, how do you make that happen? Is it possible? I wouldn’t be in this job if I didn’t believe it is possible. In fact, this imperative for change is driving the way our technology works at Palo Alto Networks right now!

And here’s the key to success from an architecture point of view: we must move away from a legacy set of isolated point solutions randomly placed throughout the network architecture and normally installed in a “bolted on” model. In this model, network security solutions don’t talk to each other. They can’t integrate effectively with one another without considerable complexity, friction, bandwidth, energy and time-consuming procedures. As a result, relying on an endless number of known signatures to stop threats usually means you’re always in the business of cleaning up the disaster instead of preventing it in the first place.

Let me paint a picture of this point, one that our Regional CSO for Europe and the Middle East, Greg Day, developed. It’s very easy and inexpensive for an attacker to change just one characteristic of a signature and reuse all the rest of a threat playbook. If we use a picture of an adversary’s face as an example, this is the equivalent of changing that person’s eye color so that you no longer recognize the face.

Breaches, intrusions and attacks happen at CPU speed, so this “picture of a face” is typically changing thousands of times in a minute. You need to be able to recognize the whole face. If you can do that, then even if the threat changes one characteristic of the lifecycle or breach playbook, you can still spot the adversary by recognizing the whole face and blocking the person.

If you can do that, then it becomes very expensive and hard work for attackers to change their approach, because they would have to change all the characteristics of their entire face (all the stages of their breach playbook). So they are likely to go try somewhere else, where their entire breach playbook or lifecycle is not blocked.

But here is what typically happens now, using various point solutions for the different facial features (sticking with the face example). Let’s say you have a threat with multiple stages of a breach playbook (a face with multiple features). And we have all these defenses, which are different tools designed to detect different aspects of a breach. These point solutions include legacy firewalls, URL filtering, antivirus, IPS, sandboxing, IDS, SSL decryption, and many more.

The different tools generate hundreds or even thousands of alerts. Most of them are false positives. Think about all the analysis you must employ to sift through them. This high workload exists because different parts of this security stack all produce alerts independently from each other.

There is a better approach in which all of the detection capability is built into a single platform that is designed from the start to work together, not retrofitted. This approach looks at the big picture – all the characteristics at once. This approach also “self-learns” in near real-time.

Here’s what I mean by being designed to work together. In the point solution model, the information about a suspected cyberthreat goes to the first component (say, AV) and is unpacked so that it can be inspected and a response created. After inspection, the unpacked version is discarded and the package is passed on to the next component (say, URL filtering), where it is unpacked and discarded…and again…and again.

In the first place, doing this unpacking many times is expensive because it is resource-hungry and repeated over and over again. You need a lot of hardware to support this approach.

Secondly, it’s very difficult to manually correlate the results from each of the inspections to look at the context of what you have found – to form the big picture. Incidentally, 30 percent of traffic coming into networks today is encrypted, which makes the unpacking process even harder. This manual multi-pass approach is slow.

We must change from this approach to an “integrated platform” approach. The key is native integration. Network security, endpoint device security, and an analytical backbone must work together, with the backbone feeding both network and endpoint security with near-real-time discovery of previously unknown threat signatures. That combination enables an organization to actually prevent adversaries from stepping through the attack lifecycle and completing their intended objective. This is what an integrated platform approach makes possible.

In this model, the unpacking happens once, and all the breach characteristics are searched for in that single unpacked copy, so the process is automated and fast. Instead of alerting every time a suspicious characteristic is found, it looks at the big picture. It alerts only when there is high confidence that an actual breach is taking place, that is, when there is a match to the entire face instead of just one of many facial features.

As well as alerting you, it blocks all the combinations of what your adversary is trying to do. It does this by self-learning and reprogramming itself. It can only do this because of the speed at which it operates. The result is fewer alerts to deal with, better visibility of what is happening, and automated blocking or prevention actions taking place.
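The contrast between repeated unpacking and single-pass inspection with "whole face" alerting can be sketched in Python. Everything here is a simplifying assumption. Base64 decoding stands in for "unpacking." The three keyword checks in `INSPECTORS` are hypothetical stand-ins for real inspection engines. `multi_pass` and `single_pass` are illustrative names, not any vendor's API. The self-learning and automatic blocking described above are not modeled.

```python
from __future__ import annotations

import base64
from typing import Callable

# Hypothetical inspectors, one per breach characteristic ("facial feature").
# Each receives already-unpacked content and reports whether its feature is present.
INSPECTORS: dict[str, Callable[[bytes], bool]] = {
    "exploit": lambda data: b"EXPLOIT" in data,
    "malware": lambda data: b"MALWARE" in data,
    "c2_callback": lambda data: b"CALLBACK" in data,
}


def multi_pass(package: bytes) -> tuple[int, list[str]]:
    """Point-solution model: every tool unpacks the package and alerts on its own."""
    unpack_count, alerts = 0, []
    for name, check in INSPECTORS.items():
        data = base64.b64decode(package)  # each tool repeats the unpacking
        unpack_count += 1
        if check(data):
            alerts.append(f"{name} alert")  # independent, uncorrelated alert
        # the unpacked copy is discarded before the next tool runs
    return unpack_count, alerts


def single_pass(package: bytes, min_features: int = len(INSPECTORS)) -> tuple[int, list[str]]:
    """Integrated model: unpack once, check every feature, alert on the whole face."""
    data = base64.b64decode(package)  # unpacked exactly once
    matched = [name for name, check in INSPECTORS.items() if check(data)]
    if len(matched) >= min_features:
        return 1, ["breach playbook: " + ", ".join(matched)]
    return 1, []
```

Consider a package that encodes `b"EXPLOIT MALWARE CALLBACK"`. `multi_pass` unpacks it three times and raises three separate alerts, while `single_pass` unpacks it once and raises a single playbook alert. A package that merely mentions `MALWARE` triggers a lone alert in the multi-pass model, but the single-pass model stays quiet. Requiring every feature to match is a deliberate oversimplification. A lower `min_features` trades more alerts for fewer missed breaches.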

TTP

Down where the rubber meets the road at the tactical, procedural level, here’s the other key to successfully moving from a legacy to a “next generation” approach. We are taking a page from the threat itself with this imperative for change. Today’s advanced threats, even the modestly advanced ones, don’t operate at manual speed. They operate at the speed of automation.

Threat actors are always going to beat our manual defensive efforts when they leverage automation and the cloud to come at us with “nth degree” permutations of code adjustments (like changing different facial features thousands of times a minute), and that’s exactly what they do. To gain an advantage over the threat, we have to do the same thing and change our approach from a procedural point of view.

Traditionally, in a “detect and respond” approach, we measure our “incident response” in days, weeks and even months because we rely almost exclusively on human decision-making and manual action. When it comes to human-based, manual action versus the power and scale of automation, we must change our approach so that we leverage automation and reserve the slower, manual procedures for what only humans must do or are best suited to do.

Here, cloud capabilities are vital. Because relying on the cloud can raise concerns about a number of issues, we believe you have to allow for different types of cloud options, such as true cloud versus on-premises cloud capabilities.

So how do you make sure that this model can scale to whatever shape and size your organization will grow to? Remember, current organizations take different products from different vendors to look for the different characteristics that adversaries might use. And they try to integrate these in some way, mostly using manual procedures and people to look at the different alerts. That’s labor- and resource-intensive and focused on detecting and responding after they have been breached.

The use of automated procedures and techniques in an integrated platform approach can reduce your workload and give you better visibility of potential attackers. Next-generation technology provides this capability and helps an organization securely manage traffic coming into the network, so that critical business functions can go on uninterrupted. This is a big step toward prevention and state-of-the-art cybersecurity.

CONCLUSION:

Imperative #3 is about changing our approach:

- From a threat focus on known signatures to a focus on suspicious techniques and making unknown signatures known rapidly.

- From a policy and strategy focus on detection and response to prevention first.

- From an architectural structure focus on retrofitted legacy point solutions that don’t communicate effectively or efficiently to a natively integrated platform.

- From a procedure/technique focus on human-based manual action to automated action.

Taken together, these changes across the four factors represent an imperative for future change if we want our cybersecurity efforts to be successful in the digital age. They will let us continue to place our trust in the digital environment while more effectively managing the growing risks associated with that same environment.

The final blog in this series will be about the tremendous advantage that the cybersecurity community can gain by leveraging a strong team approach and building effective partnerships because, in today’s advanced threat environment, you simply cannot go it alone and be successful.

Written by John A. Davis, Major General (Retired) United States Army, and Vice President and Federal Chief Security Officer (CSO) for Palo Alto Networks