Imagine you are working on a confidential contract and are unsure of your writing in a foreign language. Then you find a powerful AI tool that can polish your writing. You eagerly send your contract to the AI, and it delivers as promised. However, you later realize that your confidential document was fed into the AI model and could potentially be reviewed by AI trainers. Even worse, your contract might be used to train the model and appear in other users' outputs. How would you react? The tension between the usability and the security of AI tools has become a real concern since ChatGPT was released.

Developed by OpenAI, ChatGPT is an artificial intelligence chatbot built on OpenAI's GPT-3.5 and the more recent GPT-4 models. With over 100 million monthly active users, ChatGPT has become the most buzzworthy AI product on the internet.

ChatGPT’s capabilities include natural language generation, question answering, sentiment analysis, translation and content creation. However, despite its impressive abilities in natural language processing (NLP), ChatGPT has also raised many concerns regarding plagiarism, privacy and data leakage.

The Security Concerns of ChatGPT

In March, Italy temporarily banned ChatGPT amid concerns that the artificial intelligence tool violated the country's policies on data collection. In the meantime, companies like Amazon and Walmart have taken action. They have warned employees to take care when using generative AI services: do not share information with AI systems like ChatGPT, and do not share code with the AI chatbot. In fact, Samsung employees accidentally leaked trade secret data via ChatGPT.

Given the capabilities mentioned above, ChatGPT could raise security concerns in several ways. It could help attackers write malicious code with various obfuscations embedded. It can improve the content used in social engineering attacks, so attackers can use ChatGPT to produce convincing phishing content. Last but not least, there is the risk of data leakage, since people might submit sensitive data to the model, and this information might be retrieved later.

According to a recent blog post by Unit 42 researchers, ChatGPT-themed scam attacks are on the rise. From November 2022 through early April 2023, they observed a 910% increase in monthly registrations for domains related to ChatGPT and a 17,818% growth of related squatting domains in DNS Security logs. The researchers presented several case studies to illustrate the various methods scammers use to entice users into downloading malware or sharing sensitive information. They highlighted the potential dangers of using copycat chatbots and encouraged ChatGPT users to approach such chatbots with a defensive mindset. The Advanced URL Filtering, DNS Security and WildFire subscriptions have addressed this issue.

App-IDs Related to OpenAI

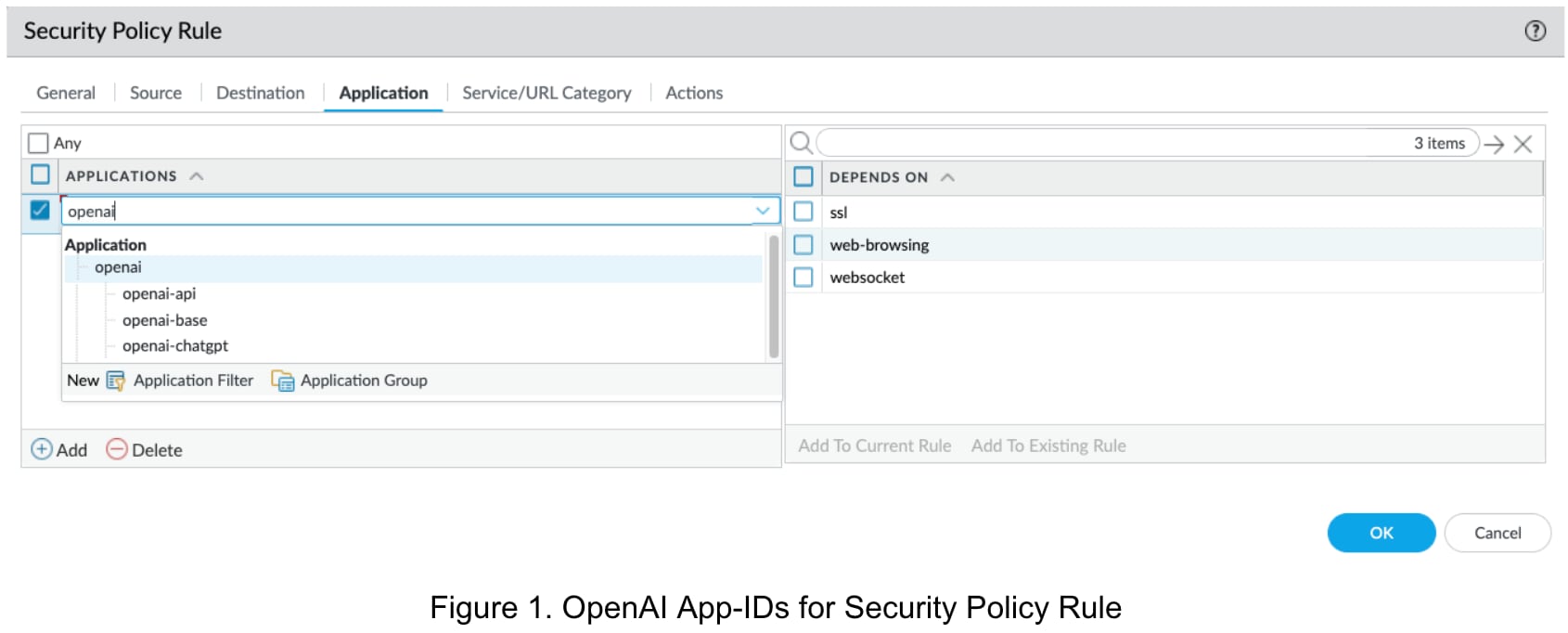

Palo Alto Networks diligently monitors the latest AI trends and actively assesses potential threats associated with them. To increase the visibility of OpenAI-related traffic and help our customers manage and control ChatGPT usage with our Next-Generation Firewalls (NGFWs), we released three OpenAI-related App-IDs in April: openai-base, openai-chatgpt and openai-api.

openai-base: Covers the general traffic of OpenAI, except for ChatGPT. This App-ID covers network traffic related to OpenAI research, developer tutorials and documentation, company information and products (such as DALL·E). Traffic to the OpenAI website also shows as openai-base in the NGFW traffic log.

openai-chatgpt: Covers the traffic of the ChatGPT web-based interface, which is the more common way for casual users to use ChatGPT.

openai-api: Covers all API-related traffic of OpenAI, including not only ChatGPT but also other features like image generation. OpenAI provides APIs that enable programmatic access to its AI models. The AI models that support ChatGPT, like GPT-3.5 and GPT-4, can be accessed through OpenAI APIs, which is the more common way for developers to use ChatGPT.

To simplify OpenAI traffic management, an App-ID container named openai, which contains the above three App-IDs, was released at the same time, as shown in figure 1.

Managing OpenAI Traffic Through Security Policies

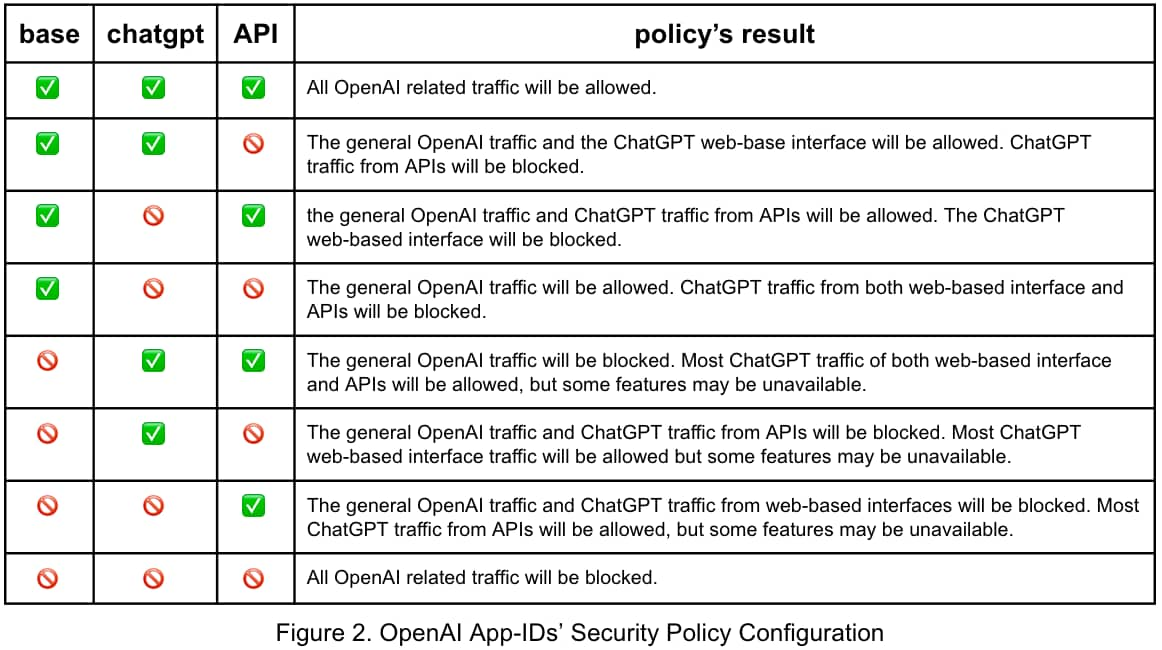

The three OpenAI App-IDs enable Palo Alto Networks NGFW customers to flexibly control and manage access to ChatGPT, and they give clear visibility into OpenAI traffic generated from different interfaces. The three App-IDs are identified separately, so blocking openai-base alone does not block openai-chatgpt or openai-api.

Figure 2 shows security policy configurations for managing OpenAI traffic with the three App-IDs in different scenarios. In the figure, ✅ means “allow” in the security policy rule, and the other symbol means “deny.”
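To illustrate why separately identified App-IDs need separate rules, here is a minimal Python sketch of first-match rule evaluation. It is a simplified model for reasoning about the scenarios, not PAN-OS configuration or an NGFW API: the `Rule` class, the `evaluate` function, the rule names and the default-deny fallback are all hypothetical, and the sketch deliberately ignores application dependencies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """Hypothetical, simplified stand-in for a security policy rule."""
    name: str
    app_ids: frozenset
    action: str  # "allow" or "deny"


def evaluate(rules, app_id, default="deny"):
    """Return the action of the first rule that lists app_id.

    The default action for unmatched traffic is an assumption of this sketch.
    """
    for rule in rules:
        if app_id in rule.app_ids:
            return rule.action
    return default


# Scenario: openai-base is denied, the other two App-IDs are allowed.
rules = [
    Rule("block-openai-base", frozenset({"openai-base"}), "deny"),
    Rule("allow-other-openai", frozenset({"openai-chatgpt", "openai-api"}), "allow"),
]

if __name__ == "__main__":
    for app in ("openai-base", "openai-chatgpt", "openai-api"):
        print(app, "->", evaluate(rules, app))
```

In this model the deny rule for openai-base never matches traffic identified as openai-chatgpt or openai-api, which is why each App-ID (or the openai container) has to be named explicitly in the rule that should govern it.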

Note that when you allow an App-ID, the App-IDs it "depends on" should also be allowed to make the application fully functional. For example, the openai-base App-ID depends on SSL, web-browsing and WebSocket, so these three App-IDs need to be allowed together with openai-base. Similarly, if you want to allow openai-chatgpt or openai-api, openai-base (including its three "depends on" App-IDs) should also be allowed to obtain full access.
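The dependency chain described above can be sketched as a small lookup that lists everything to allow for a given App-ID. This is an illustrative Python sketch only: the `DEPENDS_ON` map is built by hand from the text above rather than read from a firewall, the lowercase spellings `ssl` and `websocket` are assumptions, and `required_app_ids` is a hypothetical helper.

```python
# Dependency map transcribed from the paragraph above (assumed spellings).
DEPENDS_ON = {
    "openai-base": {"ssl", "web-browsing", "websocket"},
    "openai-chatgpt": {"openai-base"},
    "openai-api": {"openai-base"},
}


def required_app_ids(app_id):
    """Return app_id plus every App-ID it depends on, directly or indirectly."""
    required = set()
    stack = [app_id]
    while stack:
        current = stack.pop()
        if current in required:
            continue  # already visited
        required.add(current)
        stack.extend(DEPENDS_ON.get(current, ()))
    return required


if __name__ == "__main__":
    print(sorted(required_app_ids("openai-chatgpt")))
```

For openai-chatgpt, the sketch returns openai-chatgpt itself, openai-base, and the three App-IDs that openai-base depends on, matching the full-access requirement stated above.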

New Challenges from AI

The increasingly popular ChatGPT application introduces new challenges and threats to today's digital landscape. Equipped with the three OpenAI App-IDs, Palo Alto Networks NGFWs let customers flexibly control and manage ChatGPT usage and access. These App-IDs also give enterprise network administrators better visibility into ChatGPT use, which strengthens enterprise network security and helps mitigate potential data breaches. Enterprise DLP also helps to prevent data exfiltration to ChatGPT.

Chatbots based on large language models, like ChatGPT, will keep growing in number, and we will help customers address the new concerns they bring.

Learn more about the OpenAI-related App-IDs in the Palo Alto Networks Application Research Center.