How do you secure Kubernetes?

Part of the answer is to identify and address security risks within the various components of Kubernetes itself as well as their configurations. You can do this by, for example, validating kubelet configurations and control plane encryption practices.

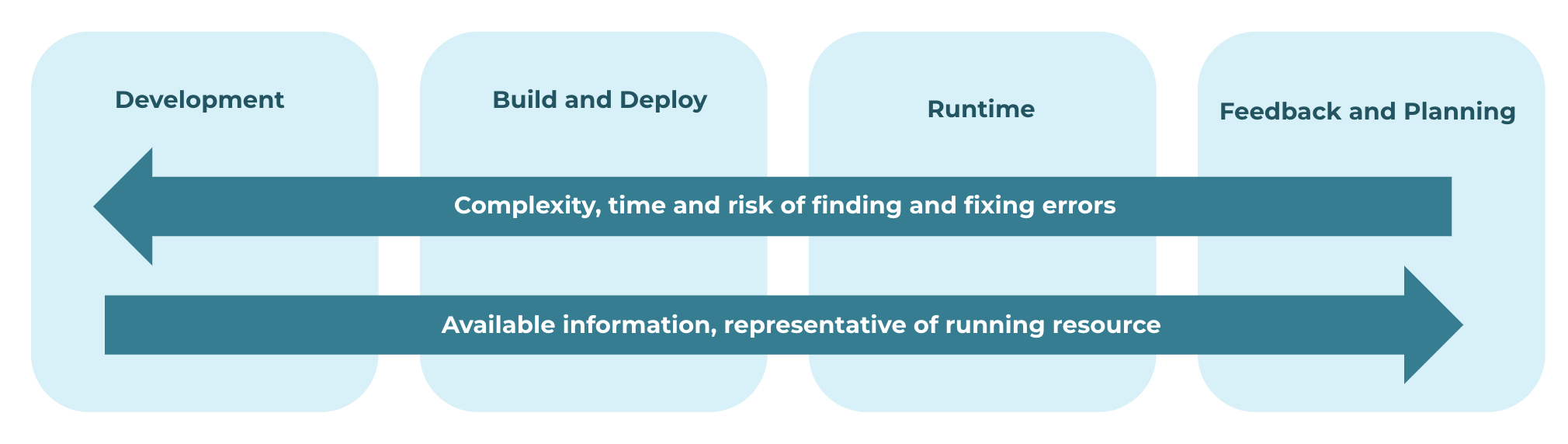

But the other half of Kubernetes security, and the one that is easier to overlook, is the DevOps lifecycle. Kubernetes doesn’t exist in a vacuum. In most cases, Kubernetes environments are defined with infrastructure as code (IaC) and sit at the end of a continuous integration/continuous delivery (CI/CD) pipeline that DevOps teams use to deliver software. To secure Kubernetes, then, you also need to secure the code layer, the delivery pipeline that feeds into it, and the components involved at each phase.

This article explains how to manage Kubernetes security risks at each stage of the DevOps lifecycle, from code and build to deploy and runtime. As we’ll see, holistic Kubernetes security depends on teams catching risks at each of these stages before they can turn into risks within a Kubernetes production environment.

Developing Secure Kubernetes Code

Let’s start with the first stage of any development lifecycle: code development. This is the stage during which developers write code for applications and infrastructure that will later be deployed into Kubernetes.

You'll want to remain aware of three main types of security flaws when developing Kubernetes infrastructure:

- Misconfigurations within infrastructure-as-code (IaC) and container configuration

- Vulnerabilities in container images

- Secrets exposure

You can check for misconfigurations using IaC scanners such as our own Checkov, which you can run from the command line or via IDE extensions. IaC scanners validate your IaC configuration files by detecting oversights or errors that would produce insecure configurations once the files are applied.
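To make the idea concrete, here is a minimal sketch of the kind of rule an IaC scanner applies to a Kubernetes manifest. It is not Checkov’s API or policy set: the function name `find_misconfigurations` and the three rules (privileged containers, `allowPrivilegeEscalation` not explicitly false, missing resource limits) are illustrative assumptions, and the manifest is hypothetical.

```python
import json

# Hypothetical rules for illustration only; real IaC scanners such as Checkov
# ship many more policies with their own IDs and semantics.
def find_misconfigurations(manifest: dict) -> list[str]:
    """Return human-readable findings for a Pod or Deployment manifest."""
    spec = manifest.get("spec", {})
    # Pods carry containers directly under spec; Deployments nest the pod
    # spec under spec.template.spec.
    if manifest.get("kind") == "Pod":
        pod_spec = spec
    else:
        pod_spec = spec.get("template", {}).get("spec", {})

    findings = []
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        ctx = container.get("securityContext", {})
        if ctx.get("privileged", False):
            findings.append(f"{name}: runs as privileged")
        # This sketch treats an unset value as a finding, mirroring common
        # scanner policies that require an explicit false.
        if ctx.get("allowPrivilegeEscalation") is not False:
            findings.append(f"{name}: allowPrivilegeEscalation is not set to false")
        if "limits" not in container.get("resources", {}):
            findings.append(f"{name}: no resource limits")
    return findings


# Kubernetes accepts JSON as well as YAML manifests, so the standard-library
# json module is enough to parse this example.
MANIFEST = """
{
  "apiVersion": "apps/v1",
  "kind": "Deployment",
  "metadata": {"name": "web"},
  "spec": {
    "template": {
      "spec": {
        "containers": [
          {"name": "app", "image": "example/app:1.0",
           "securityContext": {"privileged": true}}
        ]
      }
    }
  }
}
"""

for finding in find_misconfigurations(json.loads(MANIFEST)):
    print(finding)
```

Running this flags all three rules for the `app` container. A real scanner works the same way in principle, just with a much larger and maintained rule set.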

Meanwhile, container image scanners check for vulnerabilities inside container images. You can run scanners on individual images directly from the command line, and many container registries also feature built-in scanners. The major limitation of container image scanning tools is that they can only detect known vulnerabilities, meaning those that have been discovered and recorded in public vulnerability databases.
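The "known vulnerabilities only" limitation follows directly from how image scanners work: they match the packages found in an image against advisory data. The sketch below assumes a hypothetical advisory table (`KNOWN_VULNERABLE`, with a made-up `EXAMPLE-2024-0001` ID) and simple dotted version strings; it is not how any particular scanner stores or compares data.

```python
# Hypothetical advisory data for illustration; real scanners pull from
# vulnerability databases and distribution security trackers.
KNOWN_VULNERABLE = {
    # package name -> (advisory id, first fixed version)
    "examplelib": ("EXAMPLE-2024-0001", (1, 4, 2)),
}


def parse_version(version: str) -> tuple[int, ...]:
    """Parse a simple dotted version such as '1.4.0' (no pre-release tags)."""
    return tuple(int(part) for part in version.split("."))


def scan_packages(installed: dict[str, str]) -> list[str]:
    """Flag installed packages older than the first fixed version."""
    findings = []
    for package, version in installed.items():
        advisory = KNOWN_VULNERABLE.get(package)
        if advisory is None:
            # No record means "no known issue", not "no issue".
            continue
        advisory_id, fixed_in = advisory
        if parse_version(version) < fixed_in:
            fixed = ".".join(str(n) for n in fixed_in)
            findings.append(f"{package} {version}: {advisory_id} (fixed in {fixed})")
    return findings


# Package inventory as a scanner might extract it from an image's layers.
installed = {"examplelib": "1.4.0", "otherlib": "2.0.0"}
print(scan_packages(installed))
```

Note that `otherlib` passes simply because the table has no entry for it. If it contained an undisclosed flaw, this scan (like any signature-based scan) would not catch it.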

When scanning container images, keep in mind that scanners designed to validate the images alone may not detect risks that live outside the images, such as in the configuration that tells Kubernetes how to run them. That’s why you should also scan Kubernetes manifests and any other files associated with your containers. Similarly, if you produce Helm charts as part of your build process, you’ll need security scanning tools that can scan Helm charts specifically.

In addition to avoiding misconfigurations and vulnerabilities, it’s crucial to avoid hard-coding secrets (like passwords or API keys) that threat actors can leverage to gain privileged access. Secrets scanning tools check source code for sensitive data like passwords and access keys. They can also check the configuration files that developers write to govern how their application behaves once it is compiled and deployed.
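At their simplest, secrets scanners are pattern matchers run over source and configuration files. The sketch below uses two illustrative regular expressions: the widely documented `AKIA` prefix of AWS access key IDs, and a generic `password = "..."` style assignment. The rule set and the `scan_for_secrets` name are assumptions; production tools add many more patterns plus entropy checks to cut false positives.

```python
import re

# Illustrative patterns only; production secret scanners combine many more
# patterns with entropy checks to reduce false positives.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hard-coded credential": re.compile(
        r"""(?i)\b(password|passwd|secret|api_key)\s*[:=]\s*["'][^"']+["']"""
    ),
}


def scan_for_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, rule name) pairs for lines that look like secrets."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, rule))
    return hits


# A hypothetical config file; the key below is AWS's documented example key.
sample = '''db_host = "db.internal"
password = "hunter2"
aws_key = "AKIAIOSFODNN7EXAMPLE"
'''

for lineno, rule in scan_for_secrets(sample):
    print(f"line {lineno}: possible {rule}")
```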

The key to getting feedback at this stage is to integrate with developers’ local tools and workflows, either through integrated development environments (IDEs) or command line interface (CLI) tools. As code gets merged into shared repositories, it’s also important to have guardrails in place that surface findings to the whole team so issues can be addressed collaboratively.

Kubernetes at Build and Deploy

After code is written for new features or updates, it gets passed on to the build and deploy stages. This is where code is compiled, packaged and tested.

Security risks like over-privileged containers, insecure RBAC policies, or insecure networking configurations can arise when you deploy applications into production. These insecure configurations may be baked into the binaries themselves, but they can also originate from Kubernetes manifests and other configuration files that are created alongside the binaries to prepare them for deployment.
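Insecure RBAC policies are a good example of a deploy-time risk that lives in configuration rather than in the binary. The sketch below checks the `rules` of a Role or ClusterRole (which in real Kubernetes RBAC objects list `apiGroups`, `resources`, and `verbs`) for wildcards and for read access to Secrets. The two checks and the `risky_rbac_rules` name are illustrative assumptions, not a complete RBAC audit.

```python
def risky_rbac_rules(role: dict) -> list[str]:
    """Flag rules in a Role or ClusterRole that grant broad or sensitive access."""
    findings = []
    for index, rule in enumerate(role.get("rules", [])):
        verbs = set(rule.get("verbs", []))
        resources = set(rule.get("resources", []))
        # Wildcards grant every current and future verb or resource type.
        if "*" in verbs or "*" in resources:
            findings.append(f"rule {index}: wildcard in verbs or resources")
        # Reading Secrets exposes credentials stored in the cluster.
        if "secrets" in resources and verbs & {"get", "list", "watch", "*"}:
            findings.append(f"rule {index}: can read Secrets")
    return findings


# A hypothetical ClusterRole, written as the dict a YAML/JSON parser would produce.
cluster_role = {
    "apiVersion": "rbac.authorization.k8s.io/v1",
    "kind": "ClusterRole",
    "metadata": {"name": "app-role"},
    "rules": [
        {"apiGroups": [""], "resources": ["secrets"], "verbs": ["get", "list"]},
        {"apiGroups": ["apps"], "resources": ["deployments"], "verbs": ["*"]},
    ],
}

for finding in risky_rbac_rules(cluster_role):
    print(finding)
```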

Because changes made during build and deploy can introduce new security problems, it’s a best practice to run a final set of scans on both your application and any configuration or infrastructure-as-code files used to deploy it just before you actually deploy. This is your last chance to find and fix security issues before they affect users in production. Don’t squander it! Running a final set of scans may delay the deployment slightly, but a small delay is much better than having to pull the application from production because you discover a security issue after the deployment.
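In a CI/CD pipeline, that final check usually takes the form of a gate: a step that collects findings from the earlier scans and returns a nonzero exit code to stop the deploy. The sketch below assumes a hypothetical `Finding` record and a four-level severity scale; real scanners each have their own output formats, which you would need to normalize first.

```python
from dataclasses import dataclass

# Hypothetical severity scale; map each scanner's own levels onto it.
SEVERITY_ORDER = {"LOW": 1, "MEDIUM": 2, "HIGH": 3, "CRITICAL": 4}


@dataclass
class Finding:
    source: str    # which scan produced it, e.g. "iac" or "image"
    severity: str  # one of SEVERITY_ORDER's keys
    message: str


def deploy_gate(findings: list[Finding], fail_at: str = "HIGH") -> int:
    """Return a process exit code: 0 allows the deploy, 1 blocks it."""
    threshold = SEVERITY_ORDER[fail_at]
    blocking = [f for f in findings if SEVERITY_ORDER[f.severity] >= threshold]
    for f in blocking:
        print(f"BLOCKING [{f.severity}] {f.source}: {f.message}")
    return 1 if blocking else 0


findings = [
    Finding("iac", "MEDIUM", "container 'app' has no resource limits"),
    Finding("image", "CRITICAL", "examplelib 1.4.0: EXAMPLE-2024-0001"),
]
exit_code = deploy_gate(findings)
print("exit code:", exit_code)
```

In a pipeline step you would pass the result to `sys.exit()` so the CI system treats a blocked gate as a failed job. The `fail_at` threshold is a policy choice: lowering it catches more issues at the cost of more blocked deploys.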

Keeping the Kubernetes Runtime Secure

Once your app has been deployed into production, it enters the final stage of the CI/CD pipeline: runtime. If you’ve done the proper vetting during earlier stages of the DevOps lifecycle, you can be pretty confident that your runtime environment is secure.

However, there’s never a guarantee that unforeseen vulnerabilities won’t arise within a runtime environment. There is also the risk that developers or IT engineers could change configurations within a live production environment. That’s why it’s important to scan production environments continuously in order to detect updates to Security Contexts, Network Policies and other configuration data. You want to know as soon as possible if someone on your team makes a change that creates a security vulnerability – or, worse, if attackers who have found a way to access your cluster are making changes in an effort to escalate the attack.
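One way to detect such changes is drift detection: compare the security-relevant configuration you committed against what is actually running. The sketch below does a recursive diff of two nested dicts. It assumes you have already fetched the live object (for example with `kubectl get deployment <name> -o json`) and extracted the same slice from both sides; the `diff_config` name and sample values are hypothetical.

```python
def diff_config(desired: dict, live: dict, path: str = "") -> list[str]:
    """Recursively report fields whose values differ between two config trees."""
    changes = []
    for key in sorted(set(desired) | set(live)):
        here = f"{path}.{key}" if path else key
        want, got = desired.get(key), live.get(key)
        if isinstance(want, dict) and isinstance(got, dict):
            changes.extend(diff_config(want, got, here))
        elif want != got:
            # Covers changed values as well as fields added or removed live.
            changes.append(f"{here}: expected {want!r}, found {got!r}")
    return changes


# Security-relevant slice of a container spec as committed to version control...
desired = {"securityContext": {"runAsNonRoot": True, "privileged": False}}
# ...and the same slice as read back from the running cluster.
live = {"securityContext": {"runAsNonRoot": True, "privileged": True}}

for change in diff_config(desired, live):
    print(change)
```

Run on a schedule, or triggered by cluster events, a check like this turns an unexpected edit such as flipping `privileged` to true into an alert rather than a silent change.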

On top of scanning configurations, you should also secure the Kubernetes runtime environment by hardening and monitoring the nodes that host your cluster. Kernel-level security frameworks like AppArmor and SELinux restrict what processes are allowed to do, which reduces the risk of successful attacks that exploit a vulnerability within the operating systems running on nodes. Monitoring operating system logs and processes can also help you detect signs of a breach from within the OS. Security Information and Event Management (SIEM) platforms help collect and analyze this runtime data, and Security Orchestration, Automation and Response (SOAR) platforms help automate the response to the threats they surface.

The Feedback and Planning Stages

Although the DevOps pipeline ends with runtime, it’s common to think of the lifecycle as a continuous loop, in the sense that data collected from runtime environments should be used to inform the next round of application updates.

From a security perspective, this means that you should carefully log data about security issues that arise within production – or, for that matter, at any earlier stage of the lifecycle – and then find ways to prevent similar issues from recurring in the future.

For instance, if you determine that developers are making configuration changes between the testing and deployment stages that lead to unforeseen security risks, you may want to establish rules for your team that prevent these changes. Or, for a stricter approach, you can use access controls such as Kubernetes role-based access control (RBAC) to restrict who is able to modify application deployments. The fewer people who can modify configuration data, the lower the risk of changes that introduce vulnerabilities.
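Before tightening access, it helps to know who can currently modify deployments. The sketch below answers that from Role objects and RoleBindings (which in Kubernetes reference a role via `roleRef` and list `subjects` with a `kind` and `name`). It is deliberately simplified and hypothetical: it looks roles up by name only, ignores namespaces, aggregated ClusterRoles, and group membership, and treats `create`, `update`, `patch`, `delete`, and `*` as write verbs.

```python
# Verbs treated as "can modify" in this simplified sketch.
WRITE_VERBS = {"create", "update", "patch", "delete", "*"}


def can_modify_deployments(role: dict) -> bool:
    """True if any rule grants a write verb on apps/deployments."""
    for rule in role.get("rules", []):
        groups = rule.get("apiGroups", [])
        resources = rule.get("resources", [])
        verbs = set(rule.get("verbs", []))
        if (("apps" in groups or "*" in groups)
                and ("deployments" in resources or "*" in resources)
                and verbs & WRITE_VERBS):
            return True
    return False


def deployment_writers(roles: dict[str, dict], bindings: list[dict]) -> set[str]:
    """Collect 'Kind/name' for every subject bound to a role that can modify deployments."""
    writers = set()
    for binding in bindings:
        role = roles.get(binding["roleRef"]["name"])
        if role and can_modify_deployments(role):
            for subject in binding.get("subjects", []):
                writers.add(f'{subject["kind"]}/{subject["name"]}')
    return writers


# Hypothetical roles and bindings, keyed and shaped like parsed RBAC objects.
roles = {
    "deployer": {"rules": [{"apiGroups": ["apps"], "resources": ["deployments"], "verbs": ["get", "patch"]}]},
    "viewer": {"rules": [{"apiGroups": ["apps"], "resources": ["deployments"], "verbs": ["get", "list"]}]},
}
bindings = [
    {"roleRef": {"name": "deployer"}, "subjects": [{"kind": "ServiceAccount", "name": "ci-bot"}]},
    {"roleRef": {"name": "viewer"}, "subjects": [{"kind": "User", "name": "alice"}]},
]

print(sorted(deployment_writers(roles, bindings)))
```

If the resulting list is longer than expected, that is a signal to narrow the relevant roles or bindings.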

···

Taking steps to secure Kubernetes is great. But no Kubernetes environment will be very secure if the code you deploy into it contains security risks.

That’s why it’s critical to identify and address vulnerabilities at every stage of the DevOps lifecycle that feeds into Kubernetes. From the moment your developers write new source code, through to the operation of that code in a runtime environment, you should be continuously scanning and validating the security of your application itself, as well as the configuration files and dependencies that govern it.

Interested in taking a deeper dive into DevSecOps for Kubernetes environments? Download our DevSecGuide to Kubernetes!