What Is Post-Quantum Cryptography (PQC)? A Complete Guide


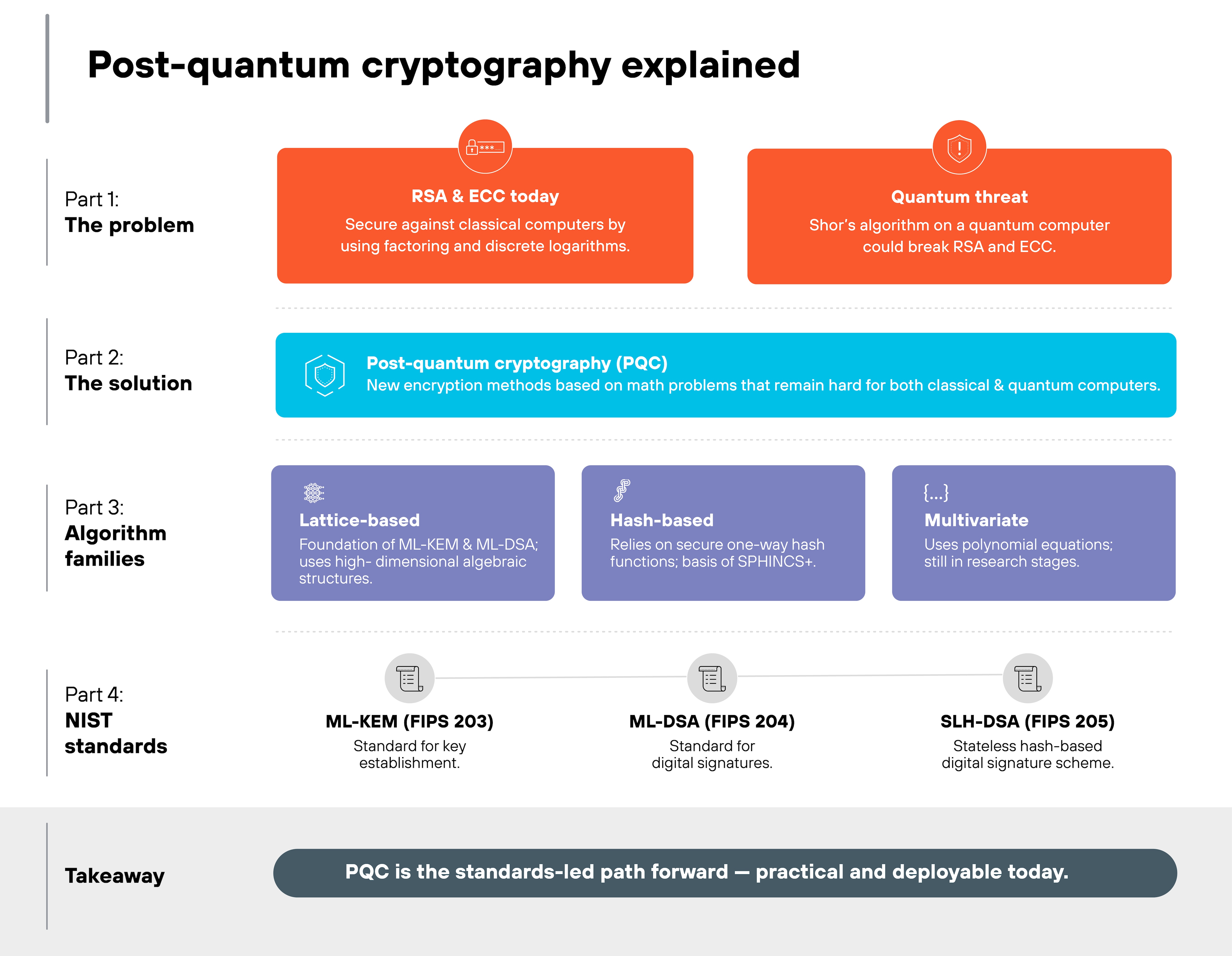

Post-quantum cryptography (PQC) refers to cryptographic algorithms designed to remain secure against a cryptographically relevant quantum computer. Current public-key standards like RSA and ECC rely on the difficulty of factoring large integers or computing discrete logarithms, tasks that a sufficiently large quantum computer could solve efficiently. PQC instead uses alternative mathematical problems, such as those underlying lattice-based and hash-based schemes, that are believed to remain computationally "hard" even for quantum computers.

Key Points

- Quantum Resistance: PQC builds on mathematical foundations, including lattices, error-correcting codes, and hash functions, for which no efficient quantum attack such as Shor's algorithm is known.

- Algorithm Standardization: The National Institute of Standards and Technology (NIST) has finalized standards, including ML-KEM and ML-DSA, to replace vulnerable classical methods.

- Harvest Now, Decrypt Later: Adversaries are currently collecting encrypted data with the intent to decrypt it once powerful quantum computers become available.

- Crypto-Agility: Organizations must adopt flexible security architectures that allow cryptographic primitives to be replaced without re-engineering entire systems.

- Legacy Vulnerability: Most existing public-key infrastructure (PKI) used for secure web browsing and financial transactions relies on RSA or ECC, which a cryptographically relevant quantum computer could break.

Post-Quantum Cryptography Explained

Post-quantum cryptography (PQC) marks a transformative evolution in securing digital trust. While traditional cryptographic methods rely on complex number-theoretic challenges, PQC leverages quantum-resistant mathematical fields, such as hash-based signatures, multivariate equations, and lattice-based cryptography.

The drive for PQC adoption is fueled by the anticipated emergence of a Cryptographically Relevant Quantum Computer (CRQC). Such a machine could employ Shor’s algorithm to compromise nearly all current asymmetric encryption standards.

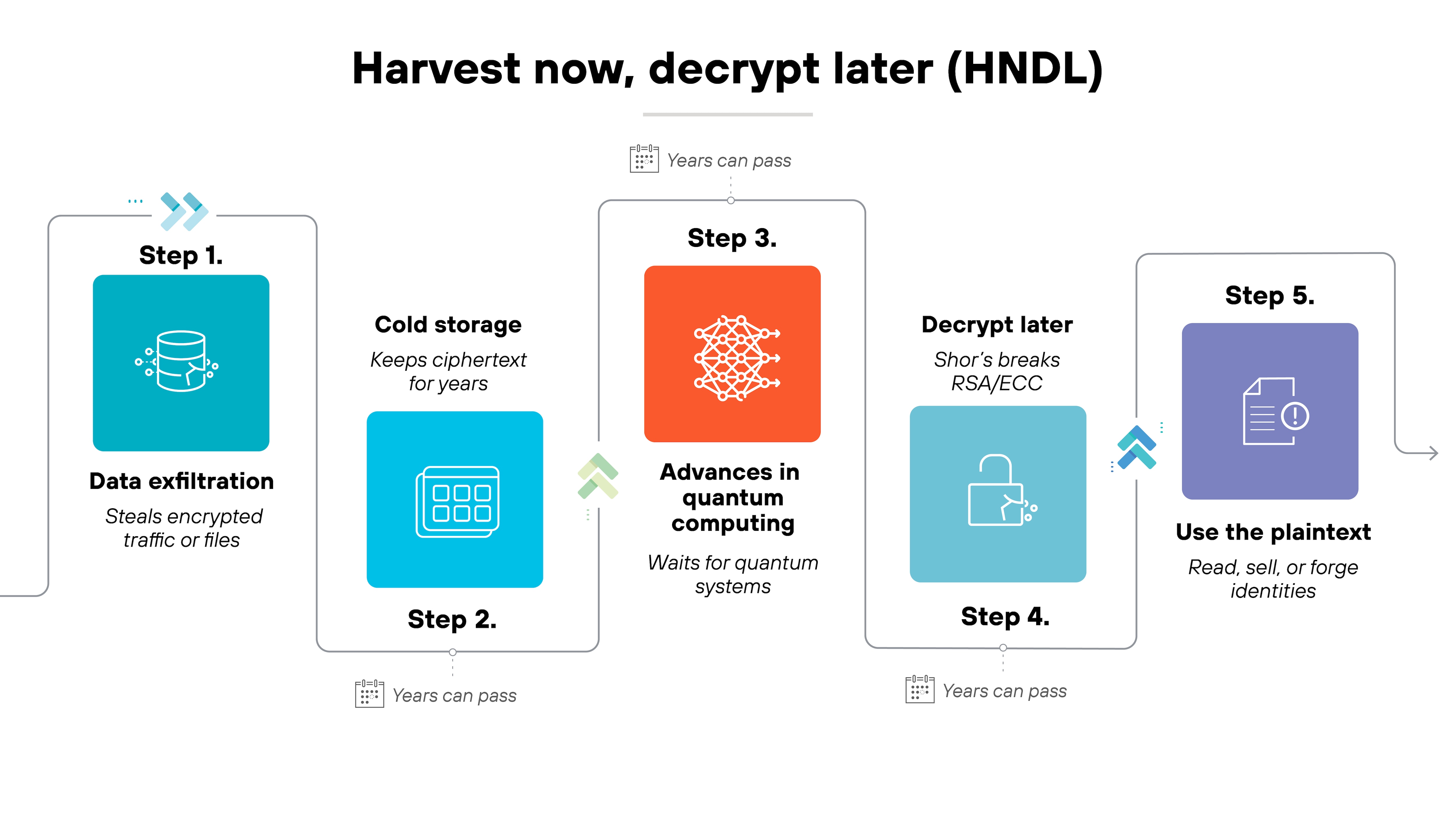

This looming capability creates an immediate "Harvest Now, Decrypt Later" (HNDL) threat, where adversaries intercept and store encrypted traffic today to unlock it once quantum technology matures. This poses a critical risk for high-value data with long-term sensitivity, including intellectual property and national security information.

In response, NIST has spearheaded a global initiative to standardize PQC algorithms, providing a framework for resilient communication in a post-quantum environment. Transitioning to these standards involves more than technical updates; it requires "crypto-agility," enabling organizations to dynamically update cryptographic defenses across extensive digital infrastructures.

"To stave off attacks by a quantum computer — if and when a cryptographically relevant one is built — the worldwide community must retire current encryption algorithms. Post-quantum encryption algorithms must be based on math problems that would be difficult for both conventional and quantum computers to solve."

- NIST, What Is Post-Quantum Cryptography?

The Quantum Threat to Modern Encryption

Public-key security on the modern internet relies almost entirely on three mathematical problems: integer factorization, discrete logarithms, and elliptic curve discrete logarithms. These problems form the backbone of RSA, Diffie-Hellman, and ECDSA, respectively.

Comparison: Classical vs. post-quantum cryptography

| Aspect | Classical public-key cryptography | Post-quantum cryptography (PQC) |
|---|---|---|
| Mathematical foundation | Based on factoring (RSA) and discrete logarithms (ECC, Diffie–Hellman) | Based on problems like lattices, hash functions, error-correcting codes, and multivariate equations |
| Vulnerability to quantum attacks | Breakable by Shor's algorithm on large-scale quantum computers | Designed to resist both classical and quantum attacks |
| Hardware requirements | Runs on classical computers | Runs on classical computers; no quantum hardware needed |
| Security assumption | Computational difficulty for classical systems | Hardness believed to hold against classical and quantum attacks |
| Examples | RSA, ECDSA, Diffie–Hellman | ML-KEM (KEM), ML-DSA (signatures), SLH-DSA (signatures), Classic McEliece (KEM) |
| Readiness for deployment | Already in use globally but becoming obsolete under quantum threat | NIST standards finalized and ready for phased deployment |

Understanding Shor's and Grover's Algorithms

Shor's algorithm is a quantum algorithm that finds the prime factors of an integer in polynomial time, and a variant of it solves the discrete logarithm problem, including on elliptic curves. Together, these would render RSA, Diffie-Hellman, and ECC insecure once a quantum computer reaches sufficient qubit stability and scale.

Grover's algorithm, while less catastrophic, offers a quadratic speedup for brute-force key search against symmetric encryption like AES, which roughly halves the effective key strength in bits. To maintain current security levels against Grover's algorithm, experts recommend doubling key lengths, for example moving from AES-128 to AES-256.
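As a rough worked example of the doubling rule: Grover's algorithm searches a space of 2^k keys in about 2^(k/2) quantum steps, so a k-bit key offers about k/2 bits of security against it. The helper name below is illustrative, and the estimate ignores the substantial practical overhead of running Grover's algorithm at scale.

```python
def grover_effective_bits(key_bits: int) -> int:
    """Approximate security level (in bits) of a symmetric key against Grover search."""
    # Grover needs about sqrt(2**key_bits) = 2**(key_bits / 2) cipher evaluations.
    return key_bits // 2


for key_bits in (128, 192, 256):
    print(f"AES-{key_bits}: ~{grover_effective_bits(key_bits)}-bit security against Grover")
```

Under this simplified model, AES-256 retains roughly the 128-bit margin that AES-128 provides against classical attackers.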

The "Harvest Now, Decrypt Later" (HNDL) Strategy

Adversaries are believed to be conducting HNDL attacks today by intercepting and storing encrypted communications. They are betting on "Q-Day", the moment a quantum computer can break standard encryption. If your data must remain confidential for 10, 15, or 20 years, it should be treated as already exposed to future quantum decryption.
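A commonly cited rule of thumb for this exposure is Mosca's inequality: data is at risk if the time it must stay confidential plus the time needed to migrate exceeds the time until a cryptographically relevant quantum computer exists. The sketch below encodes that check; the function name and the sample numbers are hypothetical, and the Q-Day estimate is an assumption you would supply yourself.

```python
def exposed_to_hndl(shelf_life_years: float,
                    migration_years: float,
                    years_until_crqc: float) -> bool:
    """Mosca's inequality: at risk when shelf life + migration time > time until a CRQC."""
    return shelf_life_years + migration_years > years_until_crqc


# Hypothetical inputs: 15-year confidentiality need, 5-year migration, CRQC assumed in 12 years.
print(exposed_to_hndl(15, 5, 12))  # True
# Short-lived data with a quick migration falls inside the assumed window.
print(exposed_to_hndl(2, 3, 12))   # False
```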

How Post-Quantum Cryptography Works

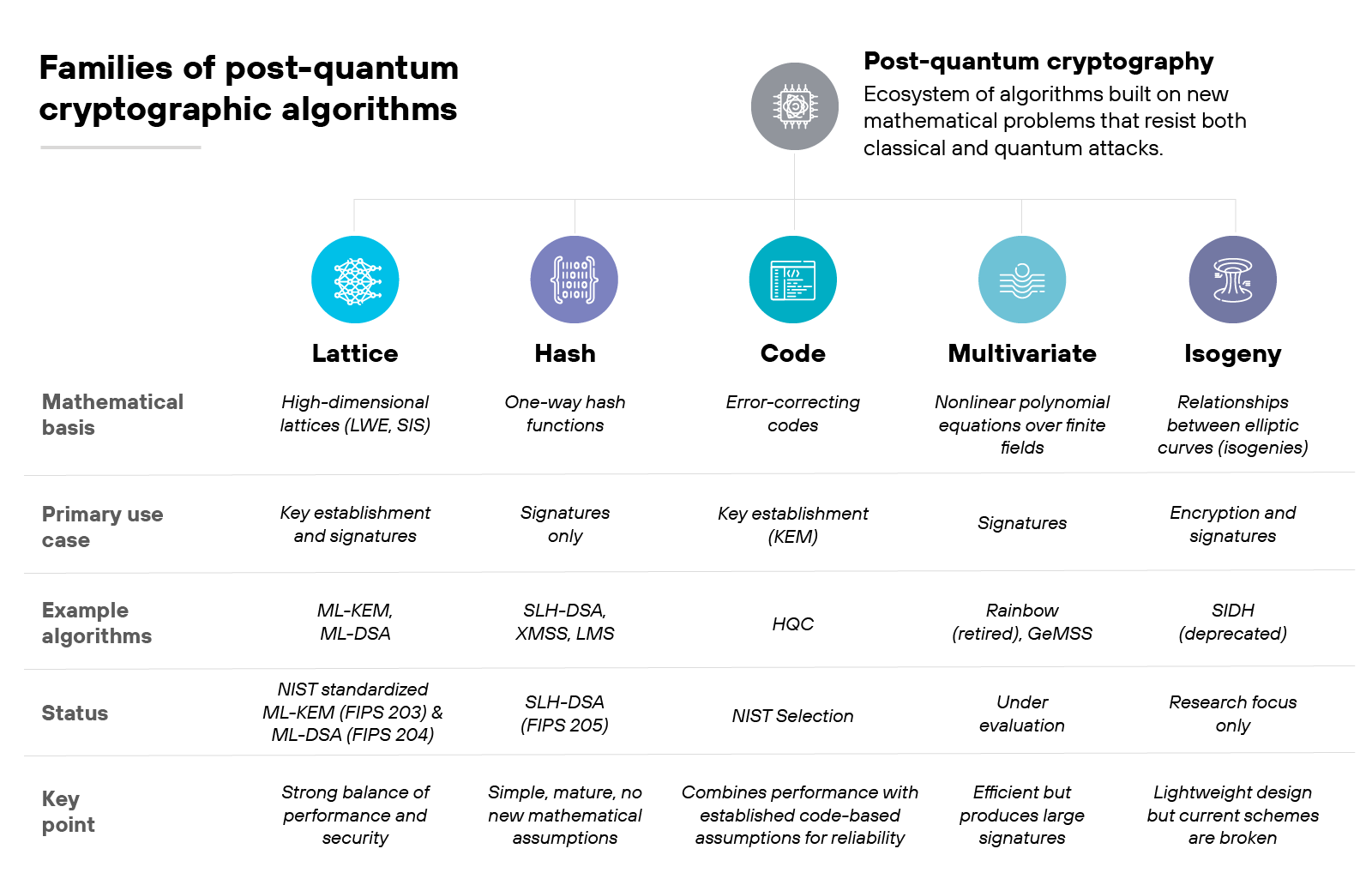

PQC shifts the cryptographic foundation to mathematical problems that are difficult for both classical and quantum computers to solve.

Lattice-Based Cryptography

Lattice-based schemes derive their security from problems such as finding the shortest vector in a high-dimensional lattice and the closely related Learning With Errors (LWE) problem. No efficient classical or quantum algorithm is known for these problems. Lattice-based cryptography is currently the most widely adopted branch of PQC because it is efficient and supports both key encapsulation and digital signatures.
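To give a feel for how a lattice problem becomes encryption, here is a toy single-bit scheme in the style of Learning With Errors (LWE), the family of problems that ML-KEM's module-lattice construction builds on. It is a teaching sketch only: the dimensions are far too small to be secure, Python's `random` module is not a cryptographic generator, and this is not the ML-KEM algorithm itself.

```python
import random

Q = 3329   # modulus (ML-KEM uses the same prime; everything else here is a toy)
N = 16     # secret dimension, far too small for real security
M = 32     # number of noisy equations in the public key


def keygen(rng):
    """Public key: random matrix A and b = A*s + e (mod Q) for small noise e."""
    s = [rng.randrange(Q) for _ in range(N)]
    A = [[rng.randrange(Q) for _ in range(N)] for _ in range(M)]
    e = [rng.choice((-1, 0, 1)) for _ in range(M)]
    b = [(sum(a * x for a, x in zip(row, s)) + err) % Q for row, err in zip(A, e)]
    return (A, b), s


def encrypt(public_key, bit, rng):
    """Add up a random subset of the noisy equations and hide the bit at Q/2."""
    A, b = public_key
    rows = [i for i in range(M) if rng.random() < 0.5]
    u = [sum(A[i][j] for i in rows) % Q for j in range(N)]
    v = (sum(b[i] for i in rows) + bit * (Q // 2)) % Q
    return u, v


def decrypt(secret, ciphertext):
    """Remove <u, s>; what remains is small noise (bit 0) or noise near Q/2 (bit 1)."""
    u, v = ciphertext
    noisy = (v - sum(x * y for x, y in zip(u, secret))) % Q
    return 1 if Q // 4 < noisy < 3 * Q // 4 else 0


rng = random.Random(2024)
public_key, secret = keygen(rng)
for bit in (0, 1):
    assert decrypt(secret, encrypt(public_key, bit, rng)) == bit
```

Without the secret `s`, recovering the bit means separating the small noise from the random-looking equations, which is the LWE problem. With these parameters the accumulated noise is at most 32, well below Q/4, so decryption never fails.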

Hash-Based and Code-Based Cryptography

Hash-based signatures rely only on the security of cryptographic hash functions. Because hash functions are already relatively resistant to quantum attacks, these methods are considered highly reliable and "conservative" choices for long-term security.

Code-based cryptography relies on the difficulty of decoding a general linear code, a problem that has remained resistant to cryptanalysis since the 1970s. It is used mainly for key encapsulation, as in Classic McEliece.
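The simplest hash-based signature is the Lamport one-time signature, sketched below with SHA-256. It shows why such schemes need nothing beyond a hash function. SLH-DSA uses considerably more elaborate constructions to sign many messages with one key, so treat this as an illustration of the idea and not of the standard. Each Lamport key pair must sign only one message.

```python
import hashlib
import secrets


def H(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def message_bits(message: bytes) -> list:
    """The 256 bits of SHA-256(message), most significant bit first."""
    digest = H(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def lamport_keygen():
    # Private key: two random secrets per digest bit. Public key: their hashes.
    private_key = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public_key = [(H(zero), H(one)) for zero, one in private_key]
    return private_key, public_key


def lamport_sign(private_key, message: bytes) -> list:
    # Reveal one secret per bit of the message digest.
    return [private_key[i][bit] for i, bit in enumerate(message_bits(message))]


def lamport_verify(public_key, message: bytes, signature) -> bool:
    # Each revealed secret must hash to the matching half of the public key.
    bits = message_bits(message)
    return len(signature) == 256 and all(
        H(sig) == public_key[i][bit] for i, (sig, bit) in enumerate(zip(signature, bits))
    )


private_key, public_key = lamport_keygen()
signature = lamport_sign(private_key, b"firmware v1.2")
print(lamport_verify(public_key, b"firmware v1.2", signature))
```

Forging a signature would require inverting the hash function for the unrevealed secrets, which is why the scheme's security reduces to that of the hash.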

Standardized Algorithms: NIST FIPS 203, 204, and 205

NIST released its first three finalized post-quantum standards in August 2024. They are the culmination of an eight-year global standardization process to identify the most robust algorithms.

| Standard | Algorithm Name | Primary Use Case |
|---|---|---|
| FIPS 203 | ML-KEM (formerly CRYSTALS-Kyber) | Key encapsulation for establishing shared secret keys, for example in web traffic (TLS). |
| FIPS 204 | ML-DSA (formerly CRYSTALS-Dilithium) | General-purpose digital signatures for identity and document signing. |
| FIPS 205 | SLH-DSA (formerly SPHINCS+) | Stateless hash-based signatures, intended as a backup in case ML-DSA proves vulnerable. |

PQC vs. Quantum Key Distribution (QKD)

It is a common misconception that PQC and QKD are the same.

- PQC is a software-based solution that uses new mathematical formulas on existing fiber, satellite, and cellular networks.

- QKD is a hardware-based solution that uses the laws of physics (quantum mechanics) to detect eavesdropping on a physical link. While QKD is highly secure, it requires specialized, expensive hardware and is difficult to scale across the global internet compared to PQC.


Preparing for the Post-Quantum Transition

Migration to PQC is not a "drop-in" replacement. It requires a fundamental re-evaluation of how an organization manages its cryptographic lifecycle.

Establishing Crypto-Agility

Crypto-agility is the ability of a system to quickly switch between encryption algorithms without requiring massive changes to the underlying infrastructure.

Modern security leaders are prioritizing vendors that support modular cryptographic libraries. This flexibility is essential because if a specific PQC algorithm is found to have a flaw in five years, agile organizations can rotate to a different NIST-approved standard quickly, without re-engineering their systems.

"Organizations that practice crypto agility should be able to turn off the use of weak cryptographic algorithms quickly when a vulnerability is discovered and adopt new cryptographic algorithms without making significant changes to infrastructures or suffering from unnecessary disruptions."

- NIST, Considerations for Achieving Crypto Agility - Strategies and Practices
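In code, crypto-agility usually comes down to two habits: choosing the algorithm from configuration instead of hardcoding it, and storing an algorithm identifier next to every protected value. The sketch below illustrates the pattern with HMAC from the Python standard library as a stand-in. The `POLICY` structure and function names are hypothetical, and a real deployment would apply the same pattern to KEMs and signature schemes.

```python
import hashlib
import hmac

# Hypothetical policy: the active algorithm is configuration, not code.
POLICY = {"active_mac": "sha256", "allowed_macs": {"sha256", "sha3_256"}}


def protect(key: bytes, message: bytes, policy=POLICY) -> dict:
    """Tag a message and record which algorithm produced the tag."""
    alg = policy["active_mac"]
    tag = hmac.new(key, message, alg).hexdigest()
    return {"alg": alg, "tag": tag}


def verify(key: bytes, message: bytes, record: dict, policy=POLICY) -> bool:
    """Verify with the algorithm named in the record, if policy still allows it."""
    if record["alg"] not in policy["allowed_macs"]:
        return False  # the algorithm has been retired
    expected = hmac.new(key, message, record["alg"]).hexdigest()
    return hmac.compare_digest(expected, record["tag"])


key = b"k" * 32
old_record = protect(key, b"invoice 42")

# Rotating algorithms is a policy change; protect() and verify() stay untouched.
rotated = {"active_mac": "sha3_256", "allowed_macs": {"sha3_256"}}
new_record = protect(key, b"invoice 42", rotated)
print(verify(key, b"invoice 42", new_record, rotated))  # True
print(verify(key, b"invoice 42", old_record, rotated))  # False: sha256 retired
```

Because each record names its algorithm, old data can be found and re-protected after a rotation instead of silently failing.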

Inventorying Assets across the Attack Surface

You cannot protect what you do not know exists. Organizations must perform a cryptographic audit to identify where RSA and ECC are used in their environments. Unit 42 research indicates that many organizations struggle with visibility into "shadow" certificates and legacy applications that use hardcoded, non-compliant encryption.
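A first pass at such an audit can be as simple as tagging each inventory entry by algorithm family. The sketch below assumes a hypothetical inventory format (a list of records with normalized algorithm names). Anything it does not recognize, including symmetric ciphers whose key length needs checking, is flagged for manual review.

```python
# Families that Shor's algorithm breaks, and the NIST PQC standards.
QUANTUM_VULNERABLE = ("RSA", "ECDSA", "ECDH", "DH", "DSA")
QUANTUM_RESISTANT = ("ML-KEM", "ML-DSA", "SLH-DSA")


def classify(algorithm: str) -> str:
    name = algorithm.upper()
    # Check PQC names first so "ML-DSA" is not confused with classical "DSA".
    if name.startswith(QUANTUM_RESISTANT):
        return "quantum-resistant"
    if name.startswith(QUANTUM_VULNERABLE):
        return "quantum-vulnerable"
    return "review"


# Hypothetical inventory entries for illustration.
inventory = [
    {"system": "public web TLS", "algorithm": "ECDSA-P256"},
    {"system": "legacy VPN", "algorithm": "RSA-2048"},
    {"system": "pilot gateway", "algorithm": "ML-KEM-768"},
    {"system": "backup archive", "algorithm": "AES-128"},
]

for entry in inventory:
    print(f'{entry["system"]}: {entry["algorithm"]} -> {classify(entry["algorithm"])}')
```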

Hybrid Cryptography: The Interim Security Model

During the transition, many experts recommend a hybrid approach. This involves running a classical key exchange such as ECDH alongside post-quantum ML-KEM and deriving the session key from both results. An attacker must break both algorithms to recover the key, so if one is broken, the other still provides protection. This ensures that the new, less battle-tested PQC algorithms do not introduce a single point of failure.
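One common way to realize a hybrid scheme is to run a classical key exchange and a post-quantum KEM side by side, then derive the session key from both shared secrets with a key derivation function. The sketch below implements HKDF-SHA256 (RFC 5869) with the standard library. The two input secrets are random placeholders standing in for real ECDH and ML-KEM outputs, and the `info` label is an arbitrary assumption.

```python
import hashlib
import hmac
import secrets


def hkdf_sha256(ikm: bytes, info: bytes, length: int = 32, salt: bytes = b"\x00" * 32) -> bytes:
    """HKDF (RFC 5869): extract a pseudorandom key, then expand it to `length` bytes."""
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def hybrid_session_key(classical_secret: bytes, pq_secret: bytes) -> bytes:
    # Concatenate both secrets so the output depends on each of them.
    return hkdf_sha256(classical_secret + pq_secret, info=b"hybrid-demo")


classical_secret = secrets.token_bytes(32)  # placeholder for an ECDH shared secret
pq_secret = secrets.token_bytes(32)         # placeholder for an ML-KEM shared secret
session_key = hybrid_session_key(classical_secret, pq_secret)
print(len(session_key))  # 32
```

An attacker who recovers only one of the two inputs still cannot compute the session key, which is the property the hybrid model relies on.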

PQC Challenges and Implementation Pitfalls

Transitioning to PQC introduces significant technical, operational, and governance hurdles that practitioners must address during the planning phase.

| Challenge | Why It Matters |
|---|---|
| Cryptographic visibility | Many organizations lack a complete inventory of cryptographic assets |
| Performance impact | Some PQC algorithms have larger keys, signatures, or computational requirements |
| Interoperability | Systems must support compatible algorithms, protocols, and certificate formats |
| Legacy systems | Older applications and devices may not support PQC updates |
| Vendor dependency | Third-party software, hardware, and cloud providers must support PQC migration |
| Certificate management | PKI environments may need new certificate profiles and lifecycle processes |
| Policy updates | Security, procurement, and compliance policies must reflect PQC requirements |
| Testing complexity | PQC changes can affect latency, handshakes, storage, and compatibility |

Increased Computational Overhead and Latency

The computational cost of PQC varies widely by algorithm. Lattice-based schemes such as ML-KEM are generally fast in optimized implementations, while hash-based SLH-DSA signing is considerably slower, and most schemes need more memory and bandwidth than their classical counterparts. For high-frequency trading or low-power IoT devices, the added latency can impact performance and battery life.

Large Key and Signature Sizes

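One of the biggest challenges is the size of the data being sent. Classical keys are small: an X25519 public key is 32 bytes and an RSA-2048 modulus is 256 bytes. By comparison, an ML-KEM-768 encapsulation key is 1,184 bytes and an ML-DSA-65 signature is 3,309 bytes.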

This increase can cause issues with network protocols like TLS, where the initial "handshake" might exceed the standard Maximum Transmission Unit (MTU), leading to packet fragmentation and dropped connections.
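A back-of-the-envelope calculation shows why the handshake can spill into a second packet. The sketch assumes a 1,500-byte MTU, 40 bytes of IPv4 and TCP headers per packet, and a hypothetical 300-byte ClientHello before the key share is added; real ClientHello sizes vary with the extensions in use.

```python
import math


def packets_needed(payload_bytes: int, mtu: int = 1500, header_bytes: int = 40) -> int:
    """TCP segments needed for a payload, given per-packet IPv4 + TCP header overhead."""
    return math.ceil(payload_bytes / (mtu - header_bytes))


BASE_CLIENT_HELLO = 300      # assumed size without the key share (varies in practice)
X25519_SHARE = 32            # classical key share
MLKEM768_ENCAPS_KEY = 1184   # ML-KEM-768 encapsulation key size from FIPS 203

classical_hello = BASE_CLIENT_HELLO + X25519_SHARE
hybrid_hello = BASE_CLIENT_HELLO + X25519_SHARE + MLKEM768_ENCAPS_KEY

print(classical_hello, packets_needed(classical_hello))  # 332 1
print(hybrid_hello, packets_needed(hybrid_hello))        # 1516 2
```

Under these assumptions the hybrid ClientHello no longer fits in a single packet, which is the situation where poorly behaved middleboxes have been known to cause trouble.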

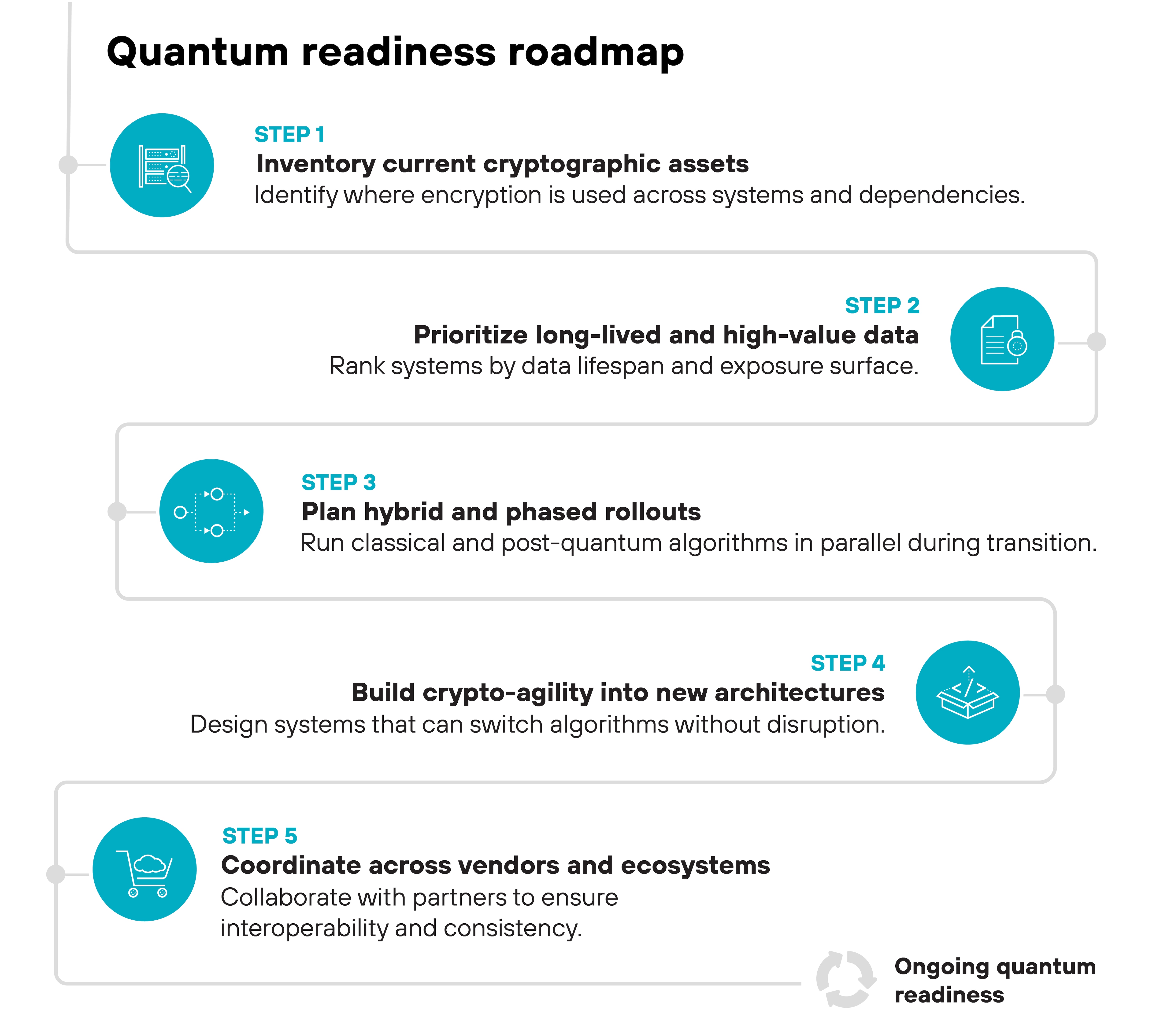

How Can Organizations Prepare for PQC?

Organizations can prepare for post-quantum cryptography by building a crypto-agile security program.

Recommended steps include:

- Create a cryptographic inventory: Document algorithms, protocols, certificates, keys, libraries, hardware security modules, applications, APIs, and third-party dependencies.

- Classify data by confidentiality lifespan: Identify data that must remain protected for years or decades.

- Map cryptography to business-critical systems: Prioritize systems that support identity, financial transactions, regulated data, critical infrastructure, software updates, and customer-facing services.

- Track vendor PQC readiness: Ask vendors how they support NIST PQC standards, hybrid modes, certificate migration, and future algorithm updates.

- Test PQC in controlled environments: Evaluate performance, interoperability, latency, certificate behavior, and operational impact before production deployment.

- Update security policies and procurement standards: Require crypto-agility, PQC support, and cryptographic transparency in new technology purchases.

- Plan phased migration: Start with high-risk data flows, long-lived sensitive data, public-facing systems, identity infrastructure, and systems with long replacement cycles.
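The inventory, classification, and phasing steps above can be tied together with a simple scoring pass. The sketch below is one possible approach: the `System` fields, the scoring weights, and the sample entries are all hypothetical and would need tuning to an organization's own risk model.

```python
from dataclasses import dataclass


@dataclass
class System:
    name: str
    confidentiality_years: int    # how long its data must stay secret
    public_facing: bool
    uses_vulnerable_crypto: bool  # e.g. RSA or ECC found in the inventory


def migration_priority(system: System) -> int:
    """Higher score means migrate sooner. Weights are illustrative assumptions."""
    if not system.uses_vulnerable_crypto:
        return 0
    score = 1
    if system.confidentiality_years >= 10:
        score += 2  # long-lived data is exposed to harvest-now, decrypt-later
    if system.public_facing:
        score += 1  # traffic is easier for an adversary to capture
    return score


systems = [
    System("internal wiki", 1, False, True),
    System("customer portal", 3, True, True),
    System("records archive", 25, False, True),
    System("partner API gateway", 15, True, True),
    System("pilot PQC tunnel", 15, True, False),
]

for system in sorted(systems, key=migration_priority, reverse=True):
    print(migration_priority(system), system.name)
```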


Post-Quantum Cryptography FAQs

When will quantum computers be able to break current encryption?

Estimates vary, but many experts point to a 10-to-15-year window. However, the HNDL threat makes PQC an immediate requirement for data with a long shelf-life.

Is AES-256 safe against quantum attacks?

Yes. While Grover’s algorithm can speed up attacks on symmetric encryption, doubling the key size to 256 bits provides sufficient security against foreseeable quantum threats.

Does PQC require new hardware?

In most cases, no. PQC is designed to run on existing classical computers, servers, and smartphones. Some older, resource-constrained IoT devices may require hardware acceleration to handle the new math.

Can PQC algorithms themselves be broken?

NIST selected these algorithms specifically because they rest on problems believed to be hard for both classical and quantum computers. However, like any new math, they undergo constant "stress testing" by the global research community.

What is the first step toward PQC migration?

The first step is a cryptographic inventory. You must identify where your high-value data is encrypted with vulnerable algorithms like RSA and prioritize those systems for a hybrid-PQC upgrade.