What Is Container Security?

Container security involves protecting containerized applications and their infrastructure throughout their lifecycle, from development to deployment and runtime. It encompasses vulnerability scanning, configuration management, access control, network segmentation, and monitoring. Container security aims to maximize the intrinsic benefits of application isolation while minimizing risks associated with resource sharing and the potential attack surface. By adhering to best practices and using specialized security tools, organizations can safeguard their container environment against unauthorized access and data breaches while maintaining compliance with industry regulations.

Container Security Explained

Containers give us the ability to leverage microservice architectures and operate with greater speed and higher portability. Containers also introduce intrinsic security benefits. Workload isolation, application abstraction, and the immutable nature of containers in fact factor heavily into their adoption.

Kubernetes, too, provides built-in security features. Administrators can define role-based access control (RBAC) policies to help guard against unauthorized access to cluster resources. They can configure pod security standards (enforced through Pod Security Admission, which replaced the PodSecurityPolicy API removed in Kubernetes 1.25) and network policies to prevent certain types of abuse on pods and the network that connects them. Administrators can also impose resource quotas to mitigate the disruption caused by an attacker who compromises one part of a cluster. With resource quotas in place, for example, an attacker won’t be able to execute a denial-of-service attack by depriving the rest of the cluster of the resources it needs to run.
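The resource quota idea can be illustrated with a minimal Python sketch. It does not reproduce how Kubernetes implements quota admission; the `Quota` class, the `admit` function, and all numbers are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Quota:
    """Hypothetical per-namespace ceiling, loosely modeled on a resource quota."""
    cpu_millicores: int
    memory_mib: int


def admit(quota, used_cpu, used_mem, req_cpu, req_mem):
    """Admit a workload only if the namespace stays within its quota."""
    return (used_cpu + req_cpu <= quota.cpu_millicores
            and used_mem + req_mem <= quota.memory_mib)


team_a = Quota(cpu_millicores=4000, memory_mib=8192)

# A normal request fits inside the remaining quota.
print(admit(team_a, used_cpu=3500, used_mem=2048, req_cpu=250, req_mem=512))    # True
# A compromised workload asking for far more CPU than the namespace owns is rejected.
print(admit(team_a, used_cpu=3500, used_mem=2048, req_cpu=16000, req_mem=512))  # False
```

Because the ceiling applies per namespace in this sketch, a compromised workload can exhaust only its own allocation, not the resources other namespaces depend on.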

But as you may have guessed, no technology is immune to malicious activities. Container security, the technologies and practices implemented to protect not only your applications but also your containerized environment — from hosts, runtimes, and registries to orchestration platforms and underlying systems — is vital.

Background

Container security reflects the changing nature of IT architecture. The rise of cloud-native computing has fundamentally altered how we create applications. Keeping pace with technology demands we adjust our approach to securing them.

In the past, cybersecurity meant protecting a single perimeter. Containers render this concept obsolete, having added multiple layers of abstraction that require specialized tools to interpret, monitor, and protect our containerized environments.

The container ecosystem can be difficult to understand, given the plethora of tools and the unique problems they solve compared to traditional platforms. At the same time, the widespread adoption of container technologies gives us an opportunity to shift left, securing containers from the earliest stages of the CI/CD pipeline through deployment and runtime.

But before diving into the details of container security, it’s necessary to understand the platforms used for managing containers. We’ll focus on one of the biggest and most well-known platforms, Kubernetes.

What Is Kubernetes?

Kubernetes is one of the leading orchestration platforms that helps optimize and implement a container-based infrastructure. More specifically, it’s an open-source platform for managing containerized workloads by automating the deployment, scaling, and management of applications.

Because Kubernetes is a widely adopted open-source platform, securing it is crucial for organizations deploying containerized applications. Organizations must establish a secure environment, particularly when incorporating open-source code into third-party applications. Kubernetes, with its extensive ecosystem and numerous integrations for managing containers, enables the creation of automated, systematic processes that integrate security into the core of its build and deployment pipeline. By leveraging Kubernetes' native features, such as RBAC, Pod Security Admission (the successor to pod security policies), and network policies, organizations can build and maintain a solid security posture with a resilient container orchestration infrastructure.

Benefits of Containers

To put it simply, containers make building, deploying, and scaling cloud-native applications easier than ever. For cloud-native app developers, the top-of-mind benefits of containers include:

- Eliminating friction: Developers avoid much of the friction associated with moving application code from testing through to production, since the application code packaged as containers can run anywhere.

- Single source of truth for application development: All the dependencies associated with the application are included within the container. This enables the application to run easily and identically across virtual machines, bare metal servers, and the public cloud.

- Faster build times: The flexibility and portability of containers enables developers to make previously unattainable gains in productivity.

- Confidence for developers: Developers can deploy their applications with confidence, knowing their application or platform will run the same across all operating systems.

- Enhanced collaboration: Multiple teams using containers can work on individual parts of an app or service without disrupting the code packaged in other containers.

Like any IT architecture, cloud-native applications require security. Container environments bring with them a range of cybersecurity challenges targeting their images, containers, hosts, runtimes, registries, and orchestration platforms — all of which need to be addressed.

Understanding the Attack Surface

Consider Kubernetes’ sprawling, multilayered framework. Each layer — from code and containers to clusters and third-party cloud services — poses a distinct set of security challenges.

Securing Kubernetes deployments requires securing the underlying infrastructure (nodes, load balancers, etc.), configurable components, and the applications that run in the cluster. This includes maintaining the posture of underlying nodes and controlling access to the API and Kubelet. It’s also important to prevent malicious workloads from running in the cluster and to isolate workload communication through strict network controls.

Container runtimes may be subject to coding flaws that enable privilege escalation within a container. The Kubernetes API server could be improperly configured, giving attackers the opportunity to access resources assumed to be locked down. Vulnerabilities enabling privilege escalation attacks could exist within a containerized application or within the operating systems running on Kubernetes nodes.

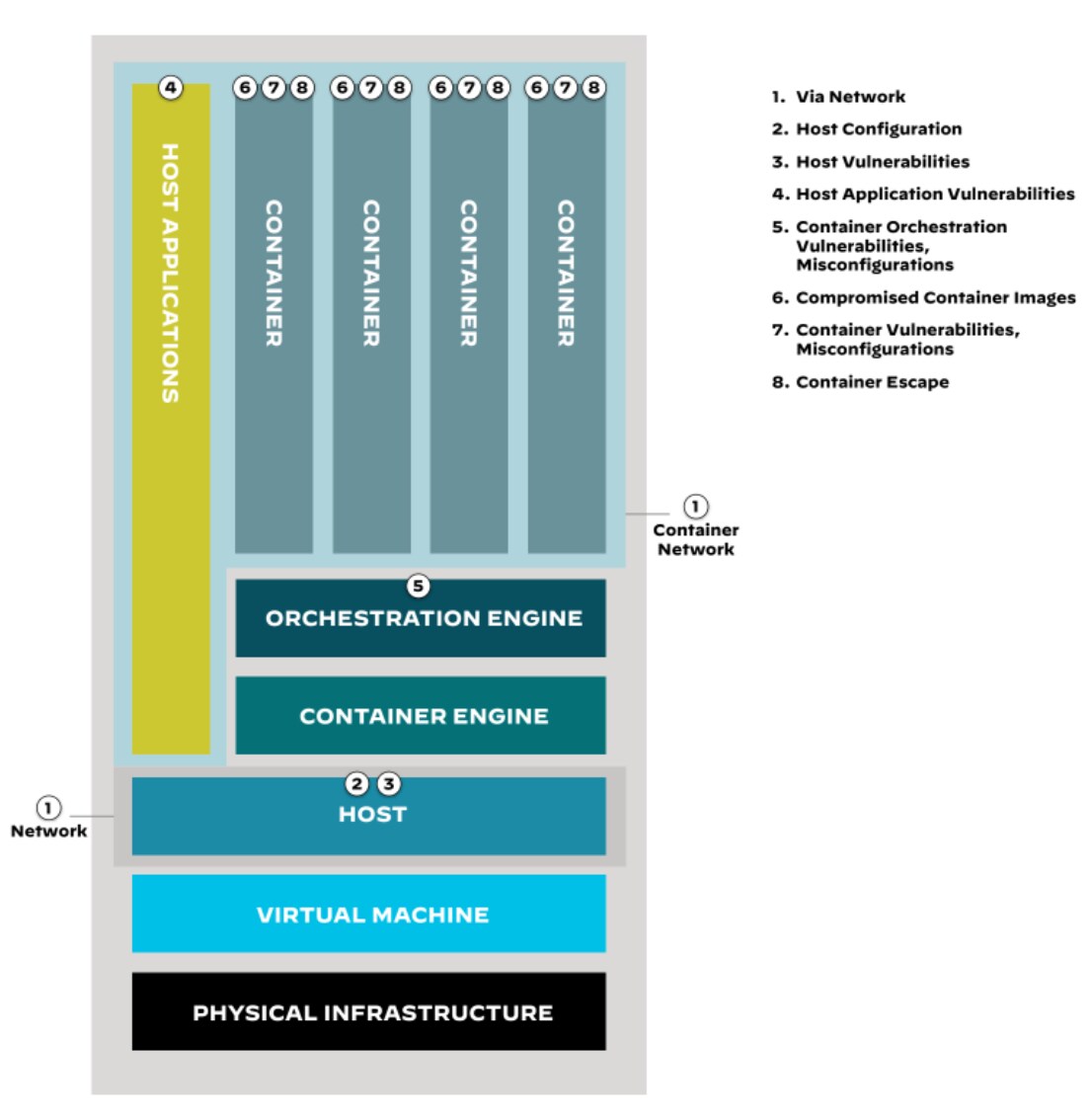

Figure 1: Anatomy of the container attack surface

In this system, an issue at one layer is amplified when another layer has a security issue.

And containers can, of course, harbor vulnerabilities. At the same time, containers can obscure visibility. Imagine a single insecure image instantiated numerous times as separate running containers. What had been a single crack is now a vast network of fissures in the fortress.

Maintaining visibility into system operations and security becomes harder as you deploy more containers. And that’s just visibility, one of countless objectives.

Figure 1, with expanded details outlined in Table 1, offers a starting point for understanding the attack surface of containerized applications.

It’s important to note that the depiction is simplified. In reality, attackers have numerous inroads to explore in their attempts to exploit vulnerabilities in containerized applications. Defending this tech stack isn’t necessarily more daunting than securing other environments and technologies. Containerization merely presents unique security considerations that organizations need to address for a secure and resilient infrastructure.

| Attack Surface Area | Attack Vector | Description | Example |
| --- | --- | --- | --- |
| Via Network | Malicious Network Traffic | Exploiting network vulnerabilities or misconfigurations to gain access to the container environment. | Scanning for open ports and exploiting misconfigurations to gain access to worker nodes. |
| Host Configuration | Misconfigured Host System | Exploiting misconfigurations in the host operating system to gain access to the container environment. | Discovering insecure file permissions to access sensitive files, such as container configuration files. |
| Host Vulnerabilities | Unpatched Host Vulnerabilities | Exploiting vulnerabilities in the host operating system to gain access to the container environment. | Identifying and exploiting unpatched kernel vulnerabilities to gain root privileges on worker nodes. |
| Host Application Vulnerabilities | Unpatched Host Application Vulnerabilities | Exploiting vulnerabilities in host applications to gain access to the container environment. | Targeting older versions of Docker with vulnerabilities to gain root privileges on worker nodes. |
| Container Orchestration Vulnerabilities and Misconfigs | Misconfigured Container Orchestration | Exploiting misconfigurations in the container orchestration system to gain access to the container environment. | Taking advantage of insecure access control policies in Kubernetes clusters to access pods and services. |
| Compromised Container Images | Attacker Gains Access to Container Image Build Process | Compromising the container image build process to inject malicious code into container images. | Exploiting vulnerabilities in CI/CD pipelines to inject malicious code during the container image build process. |
| Container Vulnerabilities and Misconfigs | Unpatched Container Vulnerabilities | Exploiting vulnerabilities in the container itself to gain access to the container environment. | Targeting unpatched vulnerabilities in popular applications running within containers to gain access. |
| Container Escape | Attacker Gains Privileged Access to Container | Breaking out of the container's isolation and gaining access to the host system. | Exploiting vulnerabilities in the container runtime or abusing host system misconfigurations to gain root privileges on the host system. |

Table 1: Breaking down the container attack surface

Fortunately, each layer of the attack surface can be fortified through design and process considerations, as well as native and third-party security options to reduce the risk of compromised workloads. You’ll need a multifaceted strategy, but our objective in this section of the guide is to provide you with just that.

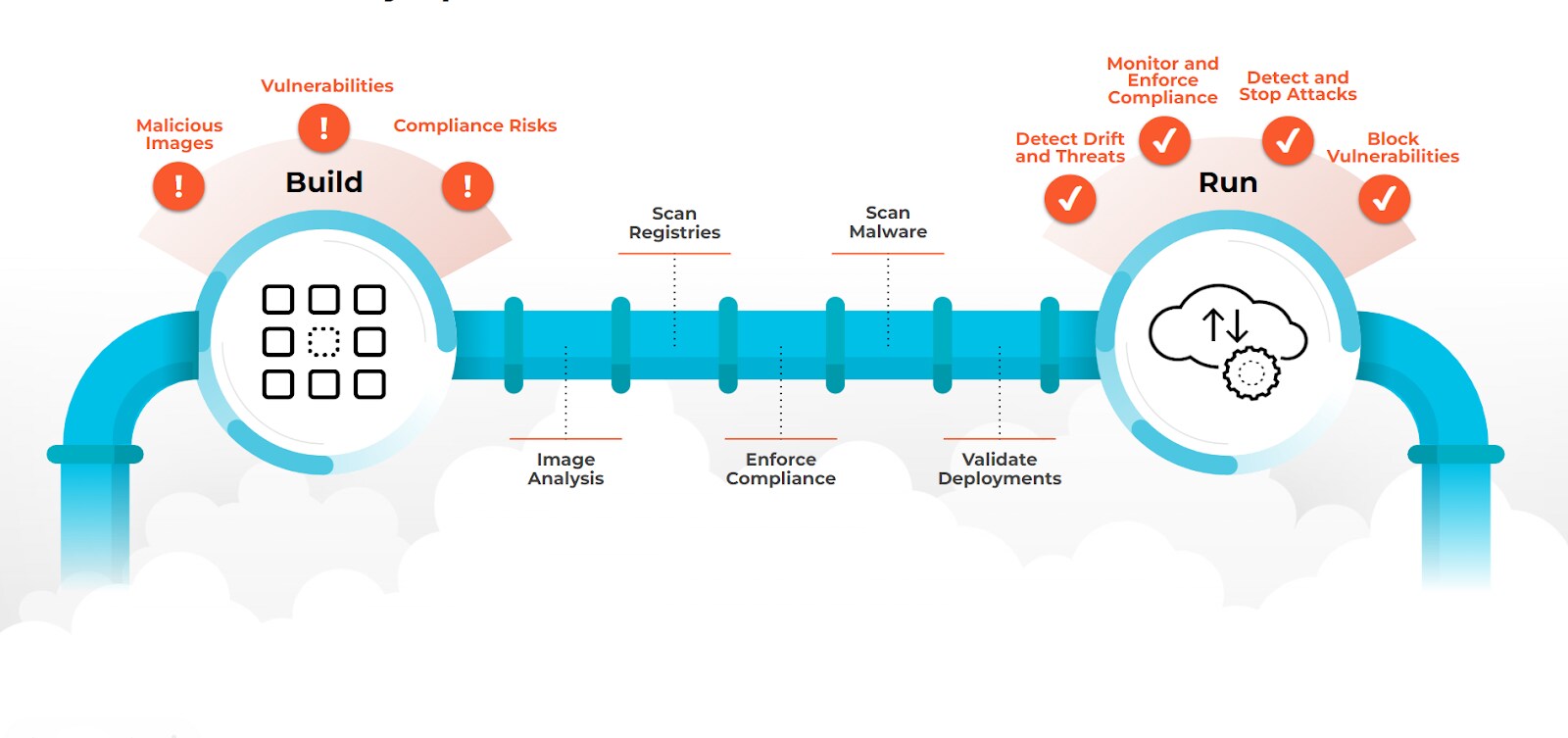

Figure 2: Container security spans the full software development lifecycle

How to Secure Containers

Container users need to ensure they have purpose-built, full-stack security to address vulnerability management, compliance, runtime protection, and network security requirements of their containerized applications.

Container Network Security

Containerized applications face the same risks as bare metal and VM-based apps, such as cryptojacking, ransomware, and botnet command and control (C2). Container network security proactively restricts unwanted communication and prevents threats from attacking your applications via a multitude of strategies. Key components of network security involve microsegmentation, access control, encryption, and policies to maintain a secure and resilient environment. Continuous monitoring, logging, and regular audits help identify and rectify potential security gaps, as does timely patching to keep your platforms and infrastructure up to date.

While shift-left security tools offer deploy-time protection against known vulnerabilities, containerized next-gen firewalls guard against unknown and unpatched vulnerabilities. Performing Layer 7 deep-packet inspection and scanning all allowed traffic, they identify and prevent malware from entering and spreading within the cluster and block malicious outbound connections used for data exfiltration and C2 attacks. Identity-based microsegmentation helps restrict the communication between applications at Layers 3 and 4.
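Identity-based microsegmentation can be pictured as a default-deny allow-list keyed on workload identity rather than IP address. The sketch below is only an illustration under that assumption; the labels, ports, and the `is_allowed` helper are hypothetical.

```python
# Hypothetical allow-list of flows: (source identity, destination identity, destination port).
ALLOWED_FLOWS = {
    ("frontend", "api", 8080),
    ("api", "database", 5432),
}


def is_allowed(src, dst, port):
    """Default deny: a flow passes only if it is explicitly listed."""
    return (src, dst, port) in ALLOWED_FLOWS


print(is_allowed("frontend", "api", 8080))       # True
print(is_allowed("frontend", "database", 5432))  # False: the frontend may not reach the database directly
```

Keying the rules on identity means they keep working when containers are rescheduled and receive new addresses.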

Container Runtime Security

Cloud-native runtime security is the process of identifying new vulnerabilities in running containers and securing the application against them. Organizations using containers should leverage enhanced runtime protection to establish the behavioral baselines upon which anomaly detection relies. Runtime security can identify and block malicious processes, files, and network behavior that deviates from a baseline.
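A behavioral baseline can be sketched as "learn what normally runs, then flag anything else." The example below is a deliberately simplified illustration that tracks only process names per image; the `ProcessBaseline` class and the sample names are assumptions, and real runtime protection also models files and network behavior.

```python
class ProcessBaseline:
    """Learns which processes each image normally runs, then flags deviations."""

    def __init__(self):
        self._known = {}  # image name -> set of process names seen while learning

    def learn(self, image, process):
        self._known.setdefault(image, set()).add(process)

    def is_anomalous(self, image, process):
        # Anything not observed during learning deviates from the baseline.
        return process not in self._known.get(image, set())


baseline = ProcessBaseline()
for proc in ("nginx", "sh"):
    baseline.learn("web:1.0", proc)

print(baseline.is_anomalous("web:1.0", "nginx"))        # False
print(baseline.is_anomalous("web:1.0", "cryptominer"))  # True
```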

Using a defense-in-depth strategy to prevent Layer 7 attacks, such as those described in the OWASP Top 10, organizations should implement runtime protection with web application and API security in addition to container network security via containerized next-gen firewalls.

Container Registry Security

Getting security into the container build phase means shifting left rather than reacting at runtime. Build phase security should focus on removing vulnerabilities, malware, and insecure code. Since containers are made of libraries, binaries, and application code, it’s critical to secure your container registries.

The first step to container registry security is to establish an official container registry for your organization. Without question, one or more registries already exist. It’s the security team’s job to find them and ensure they’re properly secured, which includes setting security standards and protocols. The overarching aim of container registry security standards should center on creating trusted images. To that end, DevOps and security teams need to align on policies that, foremost, prevent containers from being deployed from untrusted registries.
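A policy that blocks deployments from untrusted registries comes down to extracting the registry from an image reference and comparing it with an approved list. The sketch below assumes the common Docker naming convention (a first path component is treated as a registry only if it contains `.` or `:` or is `localhost`; otherwise Docker Hub is implied). The registry name `registry.example.com` is a placeholder.

```python
TRUSTED_REGISTRIES = {"registry.example.com"}  # hypothetical approved registry


def registry_of(image):
    """Return the registry host implied by an image reference."""
    first, sep, _ = image.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return "docker.io"  # no explicit registry: Docker Hub is assumed


def is_trusted(image):
    return registry_of(image) in TRUSTED_REGISTRIES


print(is_trusted("registry.example.com/team/app:1.0"))  # True
print(is_trusted("nginx:1.25"))                         # False: resolves to docker.io
```

In practice a check like this would run at admission time, so an untrusted image is rejected before it is ever scheduled.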

Intrusions or vulnerabilities within the registry provide an easy opening for compromising running applications. Continuously monitoring registries for change in vulnerability status remains a core security requirement. Other requirements include locking down the server that hosts the registry and using secure access policies.

Container Orchestration Security

Container orchestration security is the process of enacting proper access control measures to prevent risks from overprivileged accounts, attacks over the network, and unwanted lateral movement. By leveraging identity and access management (IAM) and least-privileged access, where Docker and Kubernetes activity is explicitly allowed, security and infrastructure teams can ensure that users only perform commands based on appropriate roles.

Additionally, organizations need to protect pod-to-pod communications, limit damage by preventing attackers from moving laterally through their environment, and secure any frontend services from cyberattacks.
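Least-privileged, role-based access can be sketched as two lookups: which roles a user is bound to, and which actions those roles grant. This is a simplified illustration, not the Kubernetes RBAC implementation; the role names, users, and the `can` helper are hypothetical.

```python
# Hypothetical roles: role name -> set of (verb, resource) pairs it grants.
ROLES = {
    "viewer": {("get", "pods"), ("list", "pods")},
    "deployer": {("get", "deployments"), ("update", "deployments")},
}

# Hypothetical bindings: user -> roles assigned to that user.
BINDINGS = {
    "alice": {"viewer"},
    "ci-bot": {"viewer", "deployer"},
}


def can(user, verb, resource):
    """Allow only what a bound role explicitly grants; everything else is denied."""
    return any((verb, resource) in ROLES[role] for role in BINDINGS.get(user, set()))


print(can("alice", "get", "pods"))            # True
print(can("alice", "update", "deployments"))  # False
print(can("ci-bot", "update", "deployments")) # True
```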

Host Operating System (OS) Security

Host OS security is the practice of securing your operating system (OS) from a cyberattack. As cloud-native app development technology grows, so does the need for host security.

The OS that hosts your container environment is perhaps the most important layer when it comes to security. An attack that compromises the host environment could give intruders access to all other areas in your stack. That’s why hosts need to be scanned for vulnerabilities, hardened to meet CIS Benchmarks, and protected against weak access controls (Docker commands, SSH commands, sudo commands, etc.).

Container Security Solutions

Securing your containerized environment requires a layered approach to address potential vulnerabilities and threats. In recent years, the container security solutions that organizations rely on to safeguard their containerized applications and infrastructure across development, deployment, and runtime have grown more sophisticated and capable. Modern security tooling helps minimize the risk of data breaches and data leaks, promoting compliance and maintaining secure environments while accelerating DevSecOps adoption.

Container Monitoring

The ability to monitor your registry for vulnerabilities is essential to maintaining container security. Because developers are continually ripping and replacing containers, monitoring tools that enable security teams to apply time-series stamps to containers are critical when trying to determine what happened in a containerized environment.

Popular tools for container monitoring include Prometheus, Grafana, Sumo Logic, and Cortex Cloud. Cortex Cloud offers runtime threat detection and anomaly analysis for both cloud-native and traditional applications. It leverages machine learning and behavioral analysis to identify suspicious activity across the entire container lifecycle, from build to runtime.

Container Scanning Tools

Containers need to be continuously scanned for vulnerabilities, both before being deployed in a production environment and after they have been replaced. It’s too easy for developers to mistakenly include a library in a container that has known vulnerabilities. It’s also important to remember that new vulnerabilities are discovered almost daily. That means what may seem like a perfectly safe container image today could wind up as the vehicle through which all kinds of malware are distributed tomorrow. That’s why maintaining container image trust is a central component of container scanning tools.
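The reason for rescanning can be shown with a small sketch: the same image, checked against an updated advisory feed, produces a finding it did not produce the day before. The package names, the advisory ID `EXAMPLE-0001`, and the feed structure are placeholders, and the version parser assumes plain dotted numeric versions.

```python
def parse_version(version):
    """Turn '2.4.1' into (2, 4, 1) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def scan(packages, advisories):
    """Return findings for packages older than an advisory's fixed version."""
    findings = []
    for name, version in sorted(packages.items()):
        for advisory_id, fixed_in in advisories.get(name, []):
            if parse_version(version) < parse_version(fixed_in):
                findings.append(f"{name} {version}: {advisory_id}, fixed in {fixed_in}")
    return findings


image_packages = {"examplelib": "2.3.0", "otherlib": "1.0.0"}  # hypothetical image contents
feed_today = {}                                                # nothing known yet
feed_tomorrow = {"examplelib": [("EXAMPLE-0001", "2.4.1")]}    # placeholder advisory

print(scan(image_packages, feed_today))     # []
print(scan(image_packages, feed_tomorrow))  # ['examplelib 2.3.0: EXAMPLE-0001, fixed in 2.4.1']
```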

Container scanning tools include Aqua Security, Anchore, Clair, and Cortex Cloud. Cortex Cloud provides deep-layer vulnerability scanning for container images in registries and during CI/CD pipelines. It detects known vulnerabilities, misconfigurations, and malware, helping you build secure containers from the start.

Container Network Security Tools

Once deployed, containers need to be protected from the constant attempts to steal proprietary data or compute resources. Containerized next-generation firewalls, web application and API security (WAAS), and microsegmentation tools inspect and protect all traffic entering and exiting containers (north-south and east-west), granting full Layer 7 visibility and control over the Kubernetes environment. Furthermore, the containerized firewalls dynamically scale with the rapidly changing size and demands on the container infrastructure, guaranteeing security and bandwidth for business operations.

Network security tools include CNI plugins (e.g., Calico, Flannel, Cilium), service meshes such as Istio, Kubernetes NetworkPolicy, and Cortex Cloud. Cortex Cloud integrates with container orchestration platforms like Kubernetes to provide network threat detection. It secures east-west traffic between containers and prevents unauthorized lateral movement within your environment.

Policy Engines

Modern tools make it possible for cloud security teams to define policies that essentially determine who and what is allowed to access any given microservice. Organizations need a framework for defining those policies and making sure that they are consistently maintained across a highly distributed container application environment.

Popular policy engines include Cilium, OPA Gatekeeper, Neutrino, Kubernetes Network Policy API, and Cortex Cloud. Cortex Cloud enforces security policies across your container deployments, including network access control, resource limitations, and image signing. This ensures consistent security posture and compliance with your organization's standards.

Choosing the Right Solutions

When selecting a solution to secure your containerized environment, consider your organization’s needs and areas of risk. Do you need advanced threat detection, vulnerability management, or strict policy enforcement? Evaluate integration with your existing tools and infrastructure. Seamless integration with development pipelines, orchestration platforms, and SIEM systems is game changing.

Remember, effective container security goes beyond individual tools. Implementing a layered approach with continuous monitoring, proactive scanning, strong policies, and reliable network security will significantly enhance your containerized environment's resilience against threats.

Container Security FAQs

What Is a Policy Engine?

A policy engine is a software component that enables DevSecOps teams to define, manage, and enforce policies governing access and usage of resources, such as applications, networks, and data.

Policy engines evaluate incoming requests against predefined rules and conditions, making decisions based on those policies. They help ensure compliance, enhance security, and maintain control over resources. In the context of containerized environments, policy engines play a crucial role in consistently maintaining access and security policies across distributed applications and microservices, helping manage and automate policy enforcement in complex, dynamic infrastructures.
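As a rough illustration of evaluating requests against predefined rules and conditions, the sketch below models each rule as a condition plus an effect. The deny-overrides, default-deny decision logic is one common design choice rather than the behavior of any particular product, and the `Rule` class, request fields, and rules are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    effect: str                      # "allow" or "deny"
    matches: Callable[[dict], bool]  # condition evaluated against the request


def decide(request, rules):
    """An explicit deny wins; otherwise at least one allow is required."""
    effects = {rule.effect for rule in rules if rule.matches(request)}
    if "deny" in effects:
        return "deny"
    return "allow" if "allow" in effects else "deny"


RULES = [
    Rule("allow", lambda r: r["service"] == "billing" and r["team"] == "finance"),
    Rule("deny", lambda r: r["namespace"] == "default"),
]

print(decide({"service": "billing", "team": "finance", "namespace": "prod"}, RULES))     # allow
print(decide({"service": "billing", "team": "finance", "namespace": "default"}, RULES))  # deny
```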

What Is the MITRE ATT&CK Matrix?

The MITRE ATT&CK Matrix is a comprehensive, globally accessible knowledge base of cyber adversary tactics and techniques. It is developed and maintained by MITRE, a not-for-profit organization that operates research and development centers sponsored by the U.S. government. ATT&CK stands for Adversarial Tactics, Techniques, and Common Knowledge.

The matrix serves as a framework for understanding, categorizing, and documenting the various methods that cyber adversaries use to compromise systems, networks, and applications. It’s designed to help security teams, researchers, and organizations in various stages of the cybersecurity lifecycle, including threat detection, prevention, response, and mitigation.

The MITRE ATT&CK Matrix is organized into a set of categories, called tactics, representing different stages of an adversary's attack lifecycle. Each tactic contains multiple techniques that adversaries use to achieve their objectives during that stage. The techniques are further divided into subtechniques, which provide more detailed information about specific methods and tools used in cyberattacks.

What Is a Security Context?

A security context is a set of attributes or properties related to the security settings of a process, user, or object within a computing system. In the context of containerized environments, a security context defines the security and access control settings for containers and pods, such as user and group permissions, file system access, privilege levels, and other security-related configurations.

Kubernetes allows you to set security contexts at the pod level or the container level. By configuring security contexts, you can control the security settings and restrictions for your containerized applications, ensuring that they run with the appropriate permissions and in a secure manner.

Some of the key attributes that can be defined within a security context include:

- User ID (UID) and group ID (GID): These settings determine the user and group that a container or pod will run as, thereby controlling access to resources and system capabilities.

- Privilege escalation control: This setting determines whether a process within a container can gain additional privileges, such as running as a root user. By disabling privilege escalation, you can limit the potential impact of a compromised container.

- File system access: Security contexts allow you to define how containers can access the file system, including read-only access or mounting volumes with specific permissions.

- Linux capabilities: These settings control the specific capabilities that a container can use, such as network bindings, system time settings, or administration tasks.

- SELinux context: Security contexts can be used to define the SELinux context for a container or pod, enforcing mandatory access control policies and further isolating the container from the host system.

By properly configuring security contexts in Kubernetes, you can enhance the security of your containerized applications, enforce the principle of least privilege, and protect your overall system from potential security risks.
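The attributes listed above map to fields in a pod or container specification. As a hedged sketch, the function below audits a container spec (represented as a plain Python dict) for a few of those fields: `runAsNonRoot`, `allowPrivilegeEscalation`, `readOnlyRootFilesystem`, and `capabilities.drop`. The `audit_container` helper and its findings are illustrative, and treating an unset `allowPrivilegeEscalation` as permissive is an assumption of this sketch.

```python
def audit_container(container, pod_security_context=None):
    """Return hardening gaps for one container spec (a plain dict)."""
    pod_ctx = pod_security_context or {}
    ctx = container.get("securityContext", {})
    findings = []
    # runAsNonRoot may be set on the container or inherited from the pod.
    if not ctx.get("runAsNonRoot", pod_ctx.get("runAsNonRoot", False)):
        findings.append("runAsNonRoot is not true")
    # Assumption of this sketch: an unset value is treated as allowing escalation.
    if ctx.get("allowPrivilegeEscalation", True):
        findings.append("allowPrivilegeEscalation is not false")
    if not ctx.get("readOnlyRootFilesystem", False):
        findings.append("readOnlyRootFilesystem is not true")
    if "ALL" not in ctx.get("capabilities", {}).get("drop", []):
        findings.append("capabilities.drop does not include ALL")
    return findings


hardened = {
    "name": "app",
    "securityContext": {
        "runAsNonRoot": True,
        "allowPrivilegeEscalation": False,
        "readOnlyRootFilesystem": True,
        "capabilities": {"drop": ["ALL"]},
    },
}

print(audit_container(hardened))            # []
print(len(audit_container({"name": "x"})))  # 4
```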

What Is Code Security?

Code security refers to the practices and processes implemented to ensure that software code is written and maintained securely. This includes identifying and mitigating potential vulnerabilities and following secure coding best practices to prevent security risks. Code security encompasses various aspects, such as:

- Static Application Security Testing (SAST): Analyzing source code, bytecode, or binary code to identify potential security vulnerabilities without executing the code.

- Dynamic Application Security Testing (DAST): Testing running applications to identify security vulnerabilities by simulating attacks and analyzing the application's behavior.

- Software Composition Analysis: Scanning and monitoring the dependencies (libraries, frameworks, etc.) used in your code to identify known vulnerabilities and ensure they are up-to-date.

- Secure Coding Practices: Following guidelines and best practices (e.g., OWASP Top Ten Project) to write secure code and avoid introducing vulnerabilities.

What Is the Difference Between Policies and IaC?

Policies are specific security rules and guidelines used to enforce security requirements within a Kubernetes environment, while IaC is a broader practice for managing and provisioning infrastructure resources using code. Both can be used together to improve security, consistency, and automation within your Kubernetes environment.

Using IaC, you can define and manage security configurations like network policies, firewall rules, and access controls as part of your infrastructure definitions. For example, you can include Kubernetes network policies, ingress and egress configurations, and role-based access control (RBAC) policies in your Kubernetes manifests, which are then managed as infrastructure as code.

Tools like Terraform, CloudFormation, and Kubernetes manifests allow you to manage infrastructure resources and security configurations in a consistent and automated manner. By incorporating security measures into your IaC definitions, you can improve the overall security of your container and Kubernetes environment and ensure adherence to best practices and compliance requirements.

What Is Policy as Code?

Policy as code (PaC) involves encoding and managing infrastructure policies, compliance, and security rules as code within a version-controlled system. PaC allows organizations to automate the enforcement and auditing of their policies, ensuring that their infrastructure is built and maintained according to the required standards. By integrating these policies into the policy as code process or as part of the infrastructure build, organizations can ensure that their tooling aligns with the necessary standards and best practices.

What Is Alert Disposition?

Alert disposition is a method of specifying when you want an alert to notify you of an anomaly. Settings include conservative, moderate, and aggressive. Preferences are based on the severity of the issues: low, medium, or high.

- Conservative generates high severity alerts.

- Moderate generates high and medium severity alerts.

- Aggressive generates high, medium, and low severity alerts.
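The three settings above amount to a lookup from disposition to the severities that trigger an alert. A minimal sketch, with the `should_alert` helper name chosen for illustration:

```python
# Mapping taken from the list above: disposition -> severities that raise an alert.
DISPOSITION_SEVERITIES = {
    "conservative": {"high"},
    "moderate": {"high", "medium"},
    "aggressive": {"high", "medium", "low"},
}


def should_alert(disposition, severity):
    """Return True if an issue of this severity alerts under the chosen disposition."""
    return severity in DISPOSITION_SEVERITIES[disposition]


print(should_alert("conservative", "medium"))  # False
print(should_alert("moderate", "medium"))      # True
print(should_alert("aggressive", "low"))       # True
```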

What Is Secure Identity Storage?

Secure identity storage refers to solutions and mechanisms designed to safely store sensitive information, such as passwords, cryptographic keys, API tokens, and other secrets, in a highly protected and encrypted manner. Secret vaults and hardware security modules (HSMs) are two common examples of secure identity storage.

Secret Vaults are secure software-based storage systems designed to manage, store, and protect sensitive data. They employ encryption and access control mechanisms to ensure that only authorized users or applications can access the stored secrets. Examples of secret vaults include HashiCorp Vault, Azure Key Vault, and AWS Secrets Manager.

Secret Vault Features

- Encryption at rest and in transit

- Fine-grained access control

- Audit logging and monitoring

- Key rotation and versioning

- Integration with existing identity and access management (IAM) systems

Hardware security modules (HSMs) are dedicated, tamper-resistant, and highly secure physical devices that safeguard and manage cryptographic keys, perform encryption and decryption operations, and provide a secure environment for executing sensitive cryptographic functions. HSMs are designed to protect against both physical and logical attacks, ensuring the integrity and confidentiality of the stored keys. Examples of HSMs include SafeNet Luna HSM, nCipher nShield, and AWS CloudHSM.

Key Features of HSMs

- FIPS 140-2 Level 3 or higher certification (a U.S. government standard for cryptographic modules)

- Secure key generation, storage, and management

- Hardware-based random number generation

- Tamper detection and protection

- Support for a wide range of cryptographic algorithms

Both secret vaults and HSMs aim to provide a secure identity storage solution, reducing the risk of unauthorized access, data breaches, and other security incidents. Choosing between them depends on factors such as security requirements, budget, and integration needs.